1. What is machine learning, and how is it different from traditional programming?

Ans:

Machine learning enables computers to recognize patterns in data and make predictions or decisions without explicit instructions for every scenario. In traditional programming, a developer manually codes the step-by-step logic; a machine learning algorithm instead learns that logic from examples. This allows it to perform tasks such as classification, regression, and clustering, which would be difficult or impractical to program by hand.
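As a rough illustration of the difference, the sketch below puts a hand-written rule next to a rule whose threshold is learned from labeled examples. The spam scenario, the `num_links` feature, and both function names are hypothetical and chosen only for this example.

```python
def rule_based_is_spam(num_links: int) -> bool:
    """Traditional programming: the developer picks the threshold by hand."""
    return num_links > 5


def learn_threshold(examples):
    """Machine learning (toy version): pick the threshold that makes the
    fewest mistakes on labeled examples of the form (num_links, is_spam)."""
    candidates = sorted({x for x, _ in examples})
    best_threshold, best_errors = None, None
    for t in candidates:
        # Count examples where the rule "num_links > t" disagrees with the label.
        errors = sum((x > t) != label for x, label in examples)
        if best_errors is None or errors < best_errors:
            best_threshold, best_errors = t, errors
    return best_threshold


# Hypothetical labeled data: (number of links in the message, is it spam?)
examples = [(0, False), (1, False), (2, False), (3, True), (4, True), (7, True)]
learned = learn_threshold(examples)
print("learned threshold:", learned)
```

The hand-written rule stays fixed unless someone edits the code, while the learned threshold changes whenever the examples change.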

2. What are the main types of machine learning, and when are they used?

Ans:

Machine learning can be categorized into three major types. Supervised learning uses labeled data to map inputs to outputs and is applied in tasks such as classification and regression. Unsupervised learning works with unlabeled data to identify hidden patterns, groupings, or structures, as in clustering. Reinforcement learning involves an agent that learns from rewards or penalties and is suited to sequential decision-making problems such as robotics, game playing, or autonomous systems.

3. How should missing or corrupted data be handled before training a model?

Ans:

Preparing clean and reliable data is essential for effective model training. Missing or corrupted values can be addressed by removing rows or columns with excessive gaps, imputing values using statistical methods such as mean, median, or mode, or applying predictive techniques like KNN-based imputation. After cleaning, additional preprocessing like normalization, scaling, and encoding categorical variables ensures the dataset is in a suitable format for model training.
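The statistical imputation mentioned above can be sketched with the standard library alone. The `impute` helper and the `ages` column are hypothetical names used for illustration, and missing entries are assumed to be represented as `None`.

```python
from statistics import mean, median


def impute(values, strategy="mean"):
    """Replace None entries with the mean or median of the observed values."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute a column with no observed values")
    if strategy == "mean":
        fill = mean(observed)
    elif strategy == "median":
        fill = median(observed)
    else:
        raise ValueError(f"unknown strategy: {strategy!r}")
    return [fill if v is None else v for v in values]


# Hypothetical column with two missing entries.
ages = [22, None, 30, 26, None]
print(impute(ages, strategy="mean"))
print(impute(ages, strategy="median"))
```

The median is usually the safer choice when the column contains outliers, since a single extreme value can pull the mean far from the typical value.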

4. What is a confusion matrix, and why is it important for classification?

Ans:

A confusion matrix is a tabular tool that evaluates the performance of a classification model by comparing predicted outcomes with actual results. It includes True Positives (correct positive predictions), True Negatives (correct negative predictions), False Positives (incorrect positive predictions), and False Negatives (missed positive cases). This structure helps calculate metrics such as accuracy, precision, recall, and F1-score, providing insight into the types of errors a model makes.
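The four counts and the metrics derived from them can be computed directly. The following is a minimal sketch for the binary case, assuming labels are encoded as 1 (positive) and 0 (negative); the function names and sample labels are illustrative.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, tn, fp, fn) for binary labels encoded as 1 and 0."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    return tp, tn, fp, fn


def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall, and F1 from the confusion-matrix counts.
    Ratios with a zero denominator are reported as 0.0 by convention here."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 0, 1, 0]
counts = confusion_counts(y_true, y_pred)
print(counts)
print(classification_metrics(*counts))
```

Precision answers "of the cases predicted positive, how many were right?", while recall answers "of the actual positives, how many were found?", which is why the two can diverge sharply on imbalanced data.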

5. Can you explain the bias-variance tradeoff in machine learning?

Ans:

The bias-variance tradeoff is the balance between two types of prediction errors. High bias occurs when a model is too simple, leading to underfitting and failure to capture underlying trends. High variance happens when a model is overly complex, overfitting the training data and performing poorly on new inputs. Achieving the right balance ensures the model captures meaningful patterns while generalizing effectively to unseen data.
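For squared-error loss, the tradeoff can be written as a decomposition. This is the standard textbook form, under the assumption that the data follow $y = f(x) + \varepsilon$ with zero-mean noise of variance $\sigma^2$, and that $\hat{f}$ denotes the model fitted on a randomly drawn training set:

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
+ \underbrace{\mathbb{E}\big[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\big]}_{\text{variance}}
+ \underbrace{\sigma^2}_{\text{irreducible noise}}
$$

Making a model more flexible typically lowers the bias term and raises the variance term, while the noise term stays fixed regardless of the model.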

6. What is regularization, and why is it used?

Ans:

Regularization is a technique used to reduce overfitting by adding a penalty on model complexity to the training objective. It discourages the model from fitting noise in the training data, which improves generalization to new data. Common methods include L1 regularization (Lasso), which penalizes the absolute values of the weights and encourages sparsity by driving some weights to exactly zero, and L2 regularization (Ridge), which penalizes the squared weights and shrinks their magnitude. The penalty trades a small increase in training error for a more stable model that typically performs better on unseen data.
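The different effects of the two penalties are easiest to see in a one-weight model y ≈ w·x with no intercept, where both have closed-form solutions. The sketch below assumes the objectives ½·Σ(y − w·x)² + (λ/2)·w² for Ridge and ½·Σ(y − w·x)² + λ·|w| for Lasso; the function names and data are illustrative only.

```python
def ridge_weight(xs, ys, lam):
    """Minimizer of 0.5 * sum((y - w*x)^2) + 0.5 * lam * w^2 (L2 penalty)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


def lasso_weight(xs, ys, lam):
    """Minimizer of 0.5 * sum((y - w*x)^2) + lam * |w| (L1 penalty).
    The solution soft-thresholds sum(x*y): small correlations become 0."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    shrunk = max(abs(sxy) - lam, 0.0)
    sign = 1.0 if sxy >= 0 else -1.0
    return sign * shrunk / sxx


xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]  # exactly y = 2x, so the unpenalized weight is 2.0
for lam in (0.0, 14.0, 28.0):
    print(lam, ridge_weight(xs, ys, lam), lasso_weight(xs, ys, lam))
```

With a large enough penalty the L1 solution becomes exactly zero, whereas the L2 solution keeps shrinking toward zero without reaching it. This is the sparsity behavior described above.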

7. How do you decide which machine learning algorithm to use for a problem?

Ans:

Selecting the right algorithm depends on factors such as the type of data (labeled or unlabeled), the problem objective (classification, regression, clustering), dataset size and complexity, computational resources, and the need for interpretability versus accuracy. For simple datasets, algorithms like linear regression or decision trees may suffice, whereas complex data such as images or text often requires neural networks or deep learning. Understanding the data and task is crucial for choosing an effective model.

8. What is cross-validation, and why is it important in model evaluation?

Ans:

Cross-validation is a method for estimating a model’s ability to generalize to unseen data. In k-fold cross-validation, the dataset is split into k folds; the model is trained on k - 1 folds and validated on the remaining fold. This process is repeated so that each fold serves as the validation set exactly once. Averaging the results gives a more robust performance estimate than a single train/test split and makes overfitting easier to detect.
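The fold-rotation procedure can be sketched in a few lines. In this example the "model" is a deliberately trivial baseline that predicts the mean of the training targets, and the helper names are hypothetical. Folds are taken in order without shuffling, which is an assumption made to keep the sketch deterministic; real datasets are usually shuffled first.

```python
def k_fold_indices(n_samples, k):
    """Split range(n_samples) into k contiguous folds and return a list of
    (train_indices, validation_indices) pairs, one pair per fold."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    # Distribute the remainder so fold sizes differ by at most one.
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in sizes:
        folds.append(list(range(start, start + size)))
        start += size
    splits = []
    for i, val in enumerate(folds):
        train = [j for f_i, fold in enumerate(folds) if f_i != i for j in fold]
        splits.append((train, val))
    return splits


def cross_validate_mean_baseline(ys, k):
    """Average validation MSE of a baseline that predicts the training mean."""
    scores = []
    for train, val in k_fold_indices(len(ys), k):
        prediction = sum(ys[j] for j in train) / len(train)   # "training"
        mse = sum((ys[j] - prediction) ** 2 for j in val) / len(val)
        scores.append(mse)
    return sum(scores) / len(scores), scores


targets = [3.0, 5.0, 4.0, 6.0, 5.0, 7.0]
average_mse, per_fold = cross_validate_mean_baseline(targets, k=3)
print(per_fold, average_mse)
```

Replacing the two lines marked as training and scoring with a real model's fit and evaluation steps gives the usual cross-validation loop.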

9. What are feature engineering and feature selection, and how do they improve models?

Ans:

Feature engineering is the process of creating or transforming variables to make them more informative, such as deriving age from a date of birth. Feature selection focuses on identifying and retaining only the most relevant features, eliminating noise and simplifying the model. Combined, these practices improve predictive accuracy, reduce overfitting, and make model training more efficient by emphasizing meaningful input data.
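Both ideas can be shown with small helpers. The first derives age from a date of birth, as in the example above; the second applies one simple selection criterion, dropping features whose variance is at or below a threshold. The function names, column names, and data are hypothetical, and variance filtering is only one of many possible selection methods.

```python
from datetime import date
from statistics import pvariance


def age_in_years(date_of_birth: date, today: date) -> int:
    """Feature engineering: derive age in whole years from a date of birth."""
    had_birthday = (today.month, today.day) >= (date_of_birth.month,
                                                date_of_birth.day)
    return today.year - date_of_birth.year - (0 if had_birthday else 1)


def select_by_variance(columns, threshold=0.0):
    """Feature selection: keep only columns whose population variance
    exceeds the threshold (a constant column carries no information)."""
    return {name: values for name, values in columns.items()
            if pvariance(values) > threshold}


reference_day = date(2024, 6, 15)
births = [date(1990, 6, 15), date(1983, 1, 2), date(1997, 12, 31)]
columns = {
    "age": [age_in_years(d, reference_day) for d in births],
    "country_code": [1, 1, 1],  # constant, so it is dropped
}
print(select_by_variance(columns))
```

Passing the reference date explicitly, rather than calling `date.today()` inside the helper, keeps the derived feature reproducible across runs.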

10. How is deep learning different from traditional machine learning?

Ans:

Deep learning differs from traditional machine learning in several ways. It uses multi-layered neural networks to automatically extract features from raw data, while traditional ML often requires manual feature engineering. Deep learning excels at complex tasks such as image recognition, speech processing, and natural language understanding. Although it requires larger datasets and more computing power, it effectively handles unstructured and highly complex data that traditional methods struggle with.

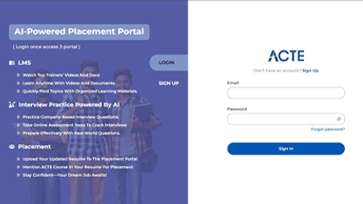
