1. What distinguishes AI, ML, and Deep Learning from each other?

Ans:

Artificial Intelligence (AI) is the broad discipline focused on designing machines capable of performing tasks that require human-like reasoning. Machine Learning (ML) is a subset of AI where models automatically identify patterns and make predictions from data. Deep Learning is a specialized area within ML that uses layered neural networks to handle complex tasks such as image recognition, speech interpretation, and natural language understanding.

2. Can you explain supervised, unsupervised, and reinforcement learning with examples?

Ans:

Supervised learning uses labeled data to train models for prediction, such as forecasting product demand. Unsupervised learning works on unlabeled data to find hidden structures or clusters, like segmenting customers for targeted marketing campaigns. Reinforcement learning trains an agent to make decisions by learning from rewards and penalties, for example, improving autonomous vehicle navigation through iterative trial-and-error.
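As a rough illustration of the first two paradigms, the sketch below fits a line to labeled (week, demand) pairs, which is supervised. It then groups unlabeled customer spend values with a tiny 1-D k-means, which is unsupervised. All data values and function names are hypothetical. Reinforcement learning is not shown.

```python
# Minimal contrast between supervised and unsupervised learning (pure Python).
# The data values below are hypothetical and chosen only for illustration.

def fit_line(xs, ys):
    """Supervised: learn y = a*x + b from labeled pairs by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    b = mean_y - a * mean_x
    return a, b


def kmeans_1d(values, centers, iterations=10):
    """Unsupervised: group unlabeled values around k centers (Lloyd's algorithm)."""
    centers = list(centers)
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for v in values:
            # Assign each value to its nearest center.
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            clusters[nearest].append(v)
        # Move each center to the mean of its values (keep it if the cluster is empty).
        centers = [sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)]
    return centers, clusters


# Supervised: past weeks (feature) with known demand (label).
weeks = [1, 2, 3, 4]
demand = [12, 14, 16, 18]
slope, intercept = fit_line(weeks, demand)
print(slope * 5 + intercept)  # forecast for week 5 -> 20.0

# Unsupervised: customer spend with no labels; find two segments.
spend = [10, 12, 11, 95, 100, 105]
centers, clusters = kmeans_1d(spend, centers=[0, 50])
print(centers)  # -> [11.0, 100.0]
```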

3. What approaches help prevent overfitting in ML models?

Ans:

Overfitting occurs when a model learns noise and quirks of its training data, so it performs well on that data but poorly on new inputs. Common countermeasures include cross-validation (to detect overfitting and tune model complexity), L1/L2 regularization, dropout layers in neural networks, pruning decision trees, collecting more training data, and data augmentation. Together, these methods help models generalize to unseen data.
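Cross-validation scores a model only on folds it was not trained on, so a model that merely fits its training data receives a poor score. The sketch below uses plain k-fold splitting with contiguous folds and no shuffling. The two model functions (`fit_mean`, `fit_line`) are hypothetical stand-ins for real models.

```python
# k-fold cross-validation sketch (pure Python, contiguous folds, no shuffling).

def k_fold_splits(n_samples, k):
    """Yield (train_idx, val_idx) pairs; every sample is validated exactly once."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        stop = start + size
        val_idx = list(range(start, stop))
        train_idx = list(range(0, start)) + list(range(stop, n_samples))
        yield train_idx, val_idx
        start = stop


def cross_val_mse(xs, ys, fit, k=5):
    """Mean validation MSE across k folds; `fit(xs, ys)` must return a predict function."""
    fold_errors = []
    for train_idx, val_idx in k_fold_splits(len(xs), k):
        predict = fit([xs[i] for i in train_idx], [ys[i] for i in train_idx])
        err = sum((predict(xs[i]) - ys[i]) ** 2 for i in val_idx) / len(val_idx)
        fold_errors.append(err)
    return sum(fold_errors) / len(fold_errors)


def fit_mean(xs, ys):
    """Hypothetical baseline model: always predict the training mean."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y


def fit_line(xs, ys):
    """Hypothetical linear model fitted by ordinary least squares."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    a = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    b = my - a * mx
    return lambda x: a * x + b


xs = list(range(10))
ys = [2 * x + 1 for x in xs]
print(cross_val_mse(xs, ys, fit_mean))  # large: the baseline misses the trend
print(cross_val_mse(xs, ys, fit_line))  # close to 0: the line generalizes
```

In practice, folds are usually shuffled (or stratified for classification) before splitting. The validation score is then used to pick hyperparameters such as the regularization strength.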

4. What is the bias-variance tradeoff in machine learning?

Ans:

The bias-variance tradeoff describes the balance between a model that is too simple (high bias) and one that is overly sensitive to its training data (high variance). High bias causes underfitting, while high variance leads to overfitting. Techniques such as ensemble learning (bagging mainly reduces variance, boosting mainly reduces bias), regularization, and cross-validation help strike the right balance and minimize total prediction error.
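For squared-error loss, the tradeoff can be made precise with the standard decomposition below. It assumes y = f(x) + ε, where the noise ε has mean 0 and variance σ² and is independent of the training set D used to fit the model f̂. The expectation is taken over training sets and noise at a fixed input x.

```latex
\mathbb{E}_{D,\varepsilon}\!\left[\big(y - \hat{f}(x)\big)^2\right]
  = \underbrace{\big(\mathbb{E}_D[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}_D\!\left[\big(\hat{f}(x) - \mathbb{E}_D[\hat{f}(x)]\big)^2\right]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
```

Making a model more flexible typically shrinks the first term and grows the second. The σ² term cannot be reduced by any model.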

5. Which metrics are used to evaluate the performance of classification models?

Ans:

Classification models are typically evaluated with metrics such as accuracy, precision, recall, F1-score, and AUC-ROC. Each metric highlights a different strength or weakness of the model, so the choice depends on the use case; for instance, recall is critical in healthcare applications, where false negatives (missed diagnoses) are costly.
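The threshold-based metrics all derive from the confusion-matrix counts (true/false positives and negatives). The sketch below computes them for a binary classifier on hypothetical screening labels. In this example, accuracy is 0.7 while recall is only 0.5, because half of the sick patients are missed. AUC-ROC needs predicted scores rather than hard labels, so it is not covered here.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for a binary classifier."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = len(pairs) - tp - fp - fn
    accuracy = (tp + tn) / len(pairs)
    # Guard against division by zero when there are no predicted/actual positives.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical screening results: 4 sick patients (1), 6 healthy (0).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 0, 0, 1]
print(classification_metrics(y_true, y_pred))
```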

6. Why are activation functions important in neural networks?

Ans:

Activation functions introduce non-linearity into neural networks, allowing them to model complex patterns in the data. Common choices include ReLU, which is cheap to compute and helps mitigate vanishing gradients in deep networks; Sigmoid, which squashes outputs into the range (0, 1) and is often used to produce probabilities; and Tanh, which scales outputs to the range (-1, 1). Without them, a stack of layers would collapse into a single linear transformation, so the network would behave like a linear model and fail to capture intricate relationships.
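The sketch below defines the three activations and checks the "collapse" argument on scalar layers with hypothetical weights. Two stacked linear layers equal one linear layer, but inserting ReLU between them breaks that equivalence. The sigmoid is written in a numerically stable form so that large negative inputs do not overflow `math.exp`.

```python
import math


def relu(z):
    return max(0.0, z)


def sigmoid(z):
    # Stable form: avoid exp of a large positive number.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def tanh(z):
    return math.tanh(z)


# Hypothetical scalar weights and biases for two layers.
W1, B1, W2, B2 = 3.0, 1.0, -2.0, 0.5


def two_linear_layers(x):
    """Layer 2 applied to layer 1 with no activation in between."""
    return W2 * (W1 * x + B1) + B2


def collapsed_layer(x):
    """The same function as a single linear layer: weight W2*W1, bias W2*B1 + B2."""
    return (W2 * W1) * x + (W2 * B1 + B2)


def two_layers_with_relu(x):
    """ReLU between the layers makes the composition non-linear."""
    return W2 * relu(W1 * x + B1) + B2


print(two_linear_layers(-1.0), collapsed_layer(-1.0))  # both 4.5
print(two_layers_with_relu(-1.0))                      # 0.5
```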

7. How do you choose the best algorithm for a machine learning problem?

Ans:

Algorithm selection depends on the data type, dataset size, project goals, interpretability requirements, and desired accuracy. Linear regression is a strong, interpretable baseline for structured numerical data when the relationship with the target is roughly linear; ensemble algorithms such as Random Forest or XGBoost typically provide robust performance on tabular datasets; and deep learning is usually the best fit for unstructured data such as images, audio, or text.

8. What is Gradient Descent, and what are its variations?

Ans:

Gradient Descent is an optimization technique that minimizes a model’s loss function by iteratively adjusting its parameters in the direction opposite to the gradient. Its main variants are Batch Gradient Descent (each update uses the full dataset), Stochastic Gradient Descent (each update uses a single sample), and Mini-batch Gradient Descent (each update uses a small subset of the data). Adaptive optimizers such as Adam, which adapt the step size for each parameter, often improve convergence speed and stability.
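The three variants differ only in how many samples feed each update. The sketch below fits y ≈ w·x + b by minimizing mean squared error. Setting `batch_size` to the dataset size gives batch gradient descent, 1 gives SGD, and values in between give mini-batch. The learning rate, epoch count, and data are illustrative assumptions, and Adam is not implemented here.

```python
import random


def minibatch_gd(xs, ys, lr=0.05, epochs=500, batch_size=2, seed=0):
    """Fit y ~ w*x + b by gradient descent on mean squared error.

    batch_size=len(xs) -> batch GD, batch_size=1 -> SGD, otherwise mini-batch."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # new sample order each epoch
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            # Gradients of mean((w*x + b - y)^2) over this batch.
            grad_w = sum(2 * (w * xs[i] + b - ys[i]) * xs[i] for i in batch) / len(batch)
            grad_b = sum(2 * (w * xs[i] + b - ys[i]) for i in batch) / len(batch)
            # Step in the direction opposite to the gradient.
            w -= lr * grad_w
            b -= lr * grad_b
    return w, b


xs = [0, 1, 2, 3, 4]
ys = [2 * x + 1 for x in xs]  # true relationship: w = 2, b = 1
for bs in (len(xs), 1, 2):
    print(bs, minibatch_gd(xs, ys, batch_size=bs))
```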

9. What challenges arise during AI/ML deployment in production?

Ans:

Deploying models in production raises challenges such as data drift, scaling, latency constraints, limited interpretability, and the need for continuous performance monitoring. Common solutions include retraining models on fresh data, containerizing them with Docker, tracking and versioning models with tools like MLflow, and monitoring live metrics with tools like Prometheus to keep the system reliable and efficient.
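One common way to flag data drift on a single feature is the Population Stability Index (PSI). It compares the binned distribution of a reference sample (e.g., training data) with live data. The sketch below is one possible check, not the only approach. The cut points, the `eps` floor for empty bins, and the data are assumptions. A commonly quoted rule of thumb treats PSI above roughly 0.25 as a significant shift, but thresholds should be tuned per feature.

```python
import bisect
import math


def bin_proportions(values, cut_points, eps=1e-6):
    """Share of values in each bin defined by sorted cut points.

    k cut points give k + 1 bins: (-inf, c1), [c1, c2), ..., [ck, +inf).
    Empty bins are floored at eps so the log below stays finite."""
    counts = [0] * (len(cut_points) + 1)
    for v in values:
        counts[bisect.bisect_right(cut_points, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(reference, live, cut_points):
    """PSI = sum over bins of (live_share - ref_share) * ln(live_share / ref_share)."""
    ref = bin_proportions(reference, cut_points)
    cur = bin_proportions(live, cut_points)
    return sum((c - r) * math.log(c / r) for c, r in zip(cur, ref))


# Hypothetical sensor readings at training time vs. in production.
reference = [1, 2, 3, 4, 5, 6, 7, 8]
shifted = [6, 7, 8, 9, 10, 11, 12, 13]
cuts = [3, 6]
print(population_stability_index(reference, reference, cuts))  # 0.0: no drift
print(population_stability_index(reference, shifted, cuts))    # large: drift
```

In a deployed system, a check like this would typically run on a schedule and export its value as a metric, so alerts can trigger retraining.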

10. Can you describe a real-world AI/ML project and its outcomes?

Ans:

In a predictive maintenance project, sensor data was used to forecast equipment failures. Challenges like missing data, imbalanced datasets, and feature selection were addressed with data imputation, SMOTE, and feature engineering. The project successfully reduced equipment downtime by 20% and optimized maintenance schedules, improving operational efficiency.
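The project's actual code is not described. As an illustration of the SMOTE idea only, the from-scratch sketch below creates synthetic minority-class (failure) samples. Each new point is interpolated between a minority sample and one of its k nearest minority neighbors. The feature names, data, and `k` value are hypothetical. Libraries such as imbalanced-learn provide a production implementation (`imblearn.over_sampling.SMOTE`).

```python
import random


def smote(minority, n_synthetic, k=2, seed=0):
    """Create synthetic minority-class points, SMOTE style.

    Each new point lies on the segment between a randomly chosen minority
    sample and one of its k nearest minority-class neighbors."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples")
    rng = random.Random(seed)

    def sq_dist(p, q):
        return sum((a - b) ** 2 for a, b in zip(p, q))

    synthetic = []
    for _ in range(n_synthetic):
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [m for j, m in enumerate(minority) if j != i]
        neighbors = sorted(others, key=lambda m: sq_dist(base, m))[:k]
        neighbor = rng.choice(neighbors)
        gap = rng.random()  # interpolation factor in [0, 1)
        synthetic.append(tuple(b + gap * (n - b) for b, n in zip(base, neighbor)))
    return synthetic


# Hypothetical (temperature, vibration) readings recorded before failures.
failures = [(80.0, 0.9), (82.0, 1.1), (85.0, 1.0), (79.0, 1.2)]
for point in smote(failures, n_synthetic=3):
    print(point)
```

Oversampling should be applied only to the training split, after the train/test split. Otherwise, synthetic points derived from test samples leak into training.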

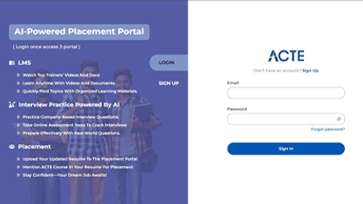

LMS
