As the demand for Big Data grows, organisations are on the lookout for Hadoop specialists. As the top Hadoop training institution in Mumbai, we teach you all you need to know to become an expert in Hadoop. Key topics of our Hadoop training include understanding Hadoop and Big Data, Hadoop architecture and HDFS, and the role of the Hadoop components, as well as integrating R and NoSQL with Hadoop. Both beginners and experts can benefit from our Hadoop courses in Mumbai. Your lessons will prepare you for a variety of Hadoop positions with average salaries ranging from 4 lakhs to 16 lakhs per year.

Additional Info

Big data is comprised of five vital components

Industry experts typically describe big data by the 5 Vs. Each one should be addressed individually, but always in relation to the others.

Volume:- Prepare a plan for how and where the data will be stored, as well as the amount of data needed.

Variety:- Analyze all the sources of data that are involved within an ecosystem and learn how to incorporate those sources into the system.

Velocity:- Today's businesses rely heavily on speed. Deploy the right technologies so that the big data picture is built in real time.

Veracity:- Garbage in, garbage out, so make sure your data is accurate and up to date. Use a big data system to surface actionable business intelligence, in an easy-to-understand manner, from the environmental data you have gathered.

Virtue:- Regulations on data privacy, data protection, and compliance must also be addressed when using big data.

What makes big data so important?

We live in a digital world where consumers expect immediate results. The modern cloud-based business world handles digital sales transactions, marketing feedback, and refinements at a blistering pace, and all of these transactions produce and compile data at a rapid rate. Putting this information to use immediately lets a business build a 360-degree view of its audience and target it effectively. A business that fails to do so will lose customers to competitors that do.

Selecting a tool:

This process can be simplified significantly with the help of big data integration tools. When choosing a big data tool, you should look for the following features:

Plenty of connectors:- There are many systems and applications in the world. Your team will save more time if your integration tool ships with many pre-built connectors.

Open-source:- Open-source architectures typically provide greater flexibility and help minimize vendor lock-in. Many big data technologies are themselves open source, which makes an open-source tool easier to integrate with them.

Portable:- In the hybrid cloud era, it is essential that companies be able to build big data integrations once and run them anywhere: on-premises, hybrid, and in the cloud.

Ease of use:- The tool should be easy to learn, with a simple and intuitive interface for visualizing your big data pipelines.

Transparent pricing:- You should not be penalized for adding connectors or data volumes to your big data integration solution.

Cloud compatibility:- Integration tools should run natively in any cloud environment, including multi-cloud and hybrid clouds, and should be able to use serverless computing so that you minimize the cost of big data processing and pay only for what you use.

Hadoop consists of four main modules:

Hadoop Distributed File System (HDFS):-

HDFS is a distributed file system that runs on standard or low-end hardware. In addition to high fault tolerance and native support for large datasets, HDFS provides better data throughput than traditional file systems.

Yet Another Resource Negotiator (YARN):-

YARN manages and monitors the cluster nodes and the resources they use. It also schedules jobs and tasks.

MapReduce:-

MapReduce is a framework that programs use to perform parallel computation on data. The map task converts the input data into a dataset of key-value pairs. Reduce tasks consume the map output, aggregate it, and produce the desired result.

Hadoop Common:-

Hadoop Common provides the shared Java libraries that all the other modules use.
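The map and reduce steps described above can be illustrated with a small word count. This is a minimal sketch in plain Python that runs in a single process, not Hadoop's Java API; the function names `map_phase`, `shuffle`, `reduce_phase`, and `word_count` are hypothetical and chosen only for this illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Map task: turn one line of input into (word, 1) key-value pairs."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group all values by key, as happens between the map and reduce steps."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Reduce task: aggregate all values seen for one key."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Run map, shuffle, and reduce in sequence over the input lines."""
    pairs = (pair for line in lines for pair in map_phase(line))
    return dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())


if __name__ == "__main__":
    print(word_count(["big data", "big cluster"]))
```

On a real cluster, many map tasks and reduce tasks would run in parallel on different nodes; the sketch only shows how the data flows from one step to the next.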

What are the key features of Hadoop?

Hadoop's top 8 features are:

1) Effective Cost Management System:-

The Hadoop framework does not need specialized hardware, which makes it a cost-effective system. It runs on ordinary, inexpensive machines, which are known technically as commodity hardware.

2) Large cluster of nodes:-

Hadoop supports large clusters, which can be made up of thousands of nodes. The main advantage of this feature is that it offers clients huge computing power and a huge storage system.

3) Parallel Processing:-

It supports parallel processing of data. Therefore, the data can be processed simultaneously across all the nodes in the cluster. This saves a lot of time.

4) Distributed Data:-

Distributing and splitting data across cluster nodes are the responsibilities of Hadoop. Additionally, data is replicated over the entire cluster.

5) Fault management using automatic failover:-

Hadoop is designed to replace a machine in the cluster when that machine fails. The failed machine's configuration settings and data are replicated to the new machine. Once this feature is properly configured on a cluster, admins do not need to worry about it.

6) Optimizing the locality of data:-

When a program is executed in the traditional way, data is transferred from the data center to the machine where the program runs. Imagine, for instance, that the data a program needs is housed in a data center in the United States, but the program runs in Singapore, and that the data is approximately 1 PB in size. Transferring such a large amount of data from the USA to Singapore would require a lot of bandwidth and time. Hadoop solves this problem by moving the code, which is small, instead of the data. The code is transferred from the Singapore data center to the USA data center and executed locally, where the data resides. This saves a lot of bandwidth and time. This data locality optimization is one of Hadoop's most important features.

7) Heterogeneous clusters:-

Hadoop supports heterogeneous clusters, which is another of its key features. A heterogeneous cluster is one whose nodes come from different vendors, and each node can run a different version or flavour of the operating system. Think about a cluster with four nodes, for example. The first is an IBM computer running RHEL (Red Hat Enterprise Linux), the second is an Intel computer running Ubuntu Linux, the third is an AMD computer running Fedora Linux, and the last is an HP computer running CentOS Linux.

8) Scalability:-

Scalability refers to the ability to add nodes to or remove nodes from a cluster. Cluster operation is not affected or brought down in any way by this procedure. You can also add or remove individual hardware components, such as RAM and hard drives, on the nodes.
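The data locality example in feature 6 can be made concrete with some rough arithmetic. The sketch below compares moving 1 PB of data with moving the program instead. The 10 Gbit/s link speed and the 50 MB program size are assumptions chosen purely for illustration, and the calculation ignores protocol overhead and latency.

```python
SECONDS_PER_DAY = 86_400


def transfer_seconds(size_bytes: int, link_bits_per_second: int) -> float:
    """Idealized transfer time: payload size in bits divided by link speed."""
    return size_bytes * 8 / link_bits_per_second


PETABYTE = 10**15          # 1 PB of data, as in the example above
LINK_SPEED = 10 * 10**9    # assumed 10 Gbit/s link between the data centers
PROGRAM_SIZE = 50 * 10**6  # assumed 50 MB program (hypothetical size)

data_seconds = transfer_seconds(PETABYTE, LINK_SPEED)
code_seconds = transfer_seconds(PROGRAM_SIZE, LINK_SPEED)

if __name__ == "__main__":
    print(f"Moving the data: {data_seconds / SECONDS_PER_DAY:.2f} days")
    print(f"Moving the code: {code_seconds:.2f} seconds")
```

Under these assumptions, shipping the data would take more than nine days, while shipping the code takes a fraction of a second.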

What is Hadoop and how does it work?

Hadoop has two core components: the Hadoop Distributed File System (HDFS) and the MapReduce framework. HDFS divides the data into smaller chunks and distributes them across the cluster, so that each chunk is stored on a separate node.

Let us suppose we have 4 terabytes of data and a Hadoop cluster with four nodes. HDFS would split the data into four parts of 1 TB each. Because the parts are written to different machines simultaneously, storing the whole data set takes about as long as storing a single part, which is significantly less time than writing everything to one disk.

In order to provide high availability, Hadoop can replicate each part of the data onto other machines in the cluster. The number of copies depends on the replication factor, which is set to three by default. With the default replication factor, three copies of each part are stored on three separate machines. Two of the copies are stored on the same rack in order to reduce latency and bandwidth use, and the last copy is stored on a different rack.

Let's say Node 1 and Node 2 are on Rack 1, and Node 3 and Node 4 are on Rack 2. Node 1 and Node 2 would then store the first two copies of part one, and Node 3 or Node 4 would store the third copy. The remaining parts of the data are stored in a similar manner. Hadoop handles the network communication between the nodes that is needed to distribute the data. In addition, processing the parts simultaneously on different nodes reduces the processing time.
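The placement described above can be sketched in a few lines of Python. This is a simplified illustration that assumes nodes 1 and 2 share one rack and nodes 3 and 4 share the other. The names `RACKS`, `place_replicas`, and `storage_plan` are hypothetical, and the round-robin choice of racks is an assumption made for the sketch; real HDFS works with much smaller blocks and applies its own rack-aware placement policy.

```python
from typing import Dict, List

# Assumed layout for this sketch: two racks with two nodes each.
RACKS: Dict[str, List[str]] = {
    "rack-1": ["node-1", "node-2"],
    "rack-2": ["node-3", "node-4"],
}


def place_replicas(part_index: int, racks: Dict[str, List[str]] = RACKS) -> List[str]:
    """Pick three nodes for one part: two on one rack, one on the other rack."""
    rack_names = sorted(racks)
    local = rack_names[part_index % len(rack_names)]
    remote = rack_names[(part_index + 1) % len(rack_names)]
    remote_nodes = racks[remote]
    # Alternate the remote node so the copies are spread evenly.
    remote_node = remote_nodes[(part_index // len(rack_names)) % len(remote_nodes)]
    return racks[local][:2] + [remote_node]


def storage_plan(total_tb: int, parts: int) -> Dict[str, dict]:
    """Split the data into equal parts and assign three replicas to each part."""
    size_tb = total_tb / parts
    return {
        f"part-{i + 1}": {"size_tb": size_tb, "nodes": place_replicas(i)}
        for i in range(parts)
    }


if __name__ == "__main__":
    plan = storage_plan(total_tb=4, parts=4)
    for name, info in plan.items():
        print(name, info)
    # Raw storage used is the data size times the replication factor.
    print("raw storage (TB):", sum(len(p["nodes"]) * p["size_tb"] for p in plan.values()))
```

With three copies of each 1 TB part, the cluster holds 12 TB of raw data for 4 TB of user data, which is the price paid for high availability.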

The top 10 Hadoop tools for big data:

The top 10 big data analytics tools for Hadoop are listed below.

1. Apache Spark:-

Apache Spark is an open-source analytics engine developed to simplify analytics operations. It is a general-purpose cluster computing platform that is built to be fast. Spark supports batch processing, machine learning, streaming data processing, and interactive queries.

2. MapReduce:-

MapReduce is a programming model, much like an algorithm or a data structure, that runs on the YARN framework. Serial processing is no longer practical when dealing with Big Data, so MapReduce performs distributed processing in parallel on a Hadoop cluster.

3. Apache Hive:-

Apache Hive is a data warehousing platform built on Hadoop. Data warehousing is about storing data that comes from many sources in a single location. Hive is one of the best tools for making data analysis on Hadoop easy, and anyone with SQL knowledge can use it efficiently. Hive's query language is known as HQL or HiveQL.

4. Apache Impala:-

Apache Impala is an open-source SQL engine that runs on Hadoop. It addresses the speed issue of Apache Hive by processing queries faster. Impala uses SQL syntax, an ODBC driver, and a user interface similar to those of Apache Hive. It can be integrated with Hadoop easily for data analytics purposes.

5. Apache Mahout:-

The name Mahout is derived from the Hindi word Mahavat, which means elephant rider. The name fits because Mahout runs on top of Hadoop, whose mascot is an elephant. Mahout is mostly used to implement machine learning techniques such as classification, collaborative filtering, and recommendation in a Hadoop environment. Machine learning algorithms can also be implemented with Apache Mahout without integrating with Hadoop.

6. Apache Pig:-

Yahoo originally developed Pig to make programming easier. Because it is built on top of Hadoop, Apache Pig can handle large amounts of data. With Apache Pig, large datasets are analyzed by expressing the analysis as a data flow. Pig also allows enormous datasets to be processed at a higher level of abstraction.

7. HBase:-

HBase is a non-relational, distributed, column-oriented NoSQL database. An HBase database contains a number of tables, and each table contains multiple rows of data. Each row has multiple column families, and these column families contain key-value pairs. HBase is built on top of HDFS (Hadoop Distributed File System). It is used when we need to look up small amounts of data in very large datasets.

8. Apache Sqoop:-

Apache Sqoop is a command-line application. It is primarily used to import structured data from RDBMSs (relational database management systems) such as MySQL, SQL Server, and Oracle into HDFS (Hadoop Distributed File System). Data in HDFS can also be exported to an RDBMS using Sqoop.

9. Tableau:-

Tableau is a software program for data visualization and business intelligence. It provides a variety of interactive visualizations to illustrate the insights in the data, translates queries into visualizations, and can import data of any range and size.

10. Apache Storm:-

Apache Storm is a free and open-source distributed computing platform built in the Java and Clojure programming languages. It is compatible with a wide range of programming languages and provides fast stream processing. Nimbus, ZooKeeper, and Supervisor are among the daemons in an Apache Storm deployment. Apache Storm can be used for real-time processing and online machine learning, among many other tasks. Many companies use Apache Storm, including Yahoo, Spotify, and Twitter.

Big data: 5 major advantages of Hadoop:

1. Scalable:-

The Hadoop storage platform is capable of storing and distributing very large data sets through a network of inexpensive, parallel servers. Hadoop enables businesses to run applications over thousands of nodes that involve thousands of terabytes of data, unlike traditional relational database management systems (RDBMS) that can't handle large amounts of data.

2. Cost effective:-

Hadoop also offers a cost-effective way to store businesses' exploding data sets. The problem with traditional relational database management systems is that they are extremely expensive to scale to the degree needed to handle such high volumes of data. In the past, many companies tried to reduce costs by down-sampling data and classifying it according to assumptions about which data was most important. The raw data would then be deleted, as storing it would have been excessively expensive.

As a result of this approach, the complete raw data set was no longer available when business priorities changed. In contrast, Hadoop uses a scale-out architecture that can affordably store all of a company's data for later use. Hadoop offers computing and storage for only a few hundred pounds per terabyte instead of tens of thousands of pounds.

3. Flexible:-

Hadoop gives businesses quick and easy access to new data sources and lets them use different types of data (structured and unstructured) to generate value. With Hadoop, businesses can get insight from data sources such as social media, email conversations, and clickstreams. Hadoop can be used for log processing, recommendation systems, data warehousing, market campaign analysis, and fraud detection, among many other purposes.

4. Fast:-

Hadoop's storage is based on a distributed file system that maps data to wherever it is located on the cluster. The tools for data processing often run on the same servers where the data is kept, which results in much faster data processing. When dealing with large volumes of unstructured data, Hadoop can process terabytes in minutes and petabytes in hours.

5. Resilient to failure:-

A key advantage of Hadoop is its fault tolerance. When data is sent to an individual node, it is also replicated to other nodes in the cluster, so that in the event of a failure another copy is available.

The MapR distribution goes beyond that by eliminating the NameNode and replacing it with a distributed "No NameNode" architecture, which is more reliable. This architecture protects against both single and multiple failures.

How does the Big Data Hadoop certification help in jobs?

The job market is competitive today, with only a limited number of openings available. Without a specialization, you are unlikely to get the job you want. The use of Hadoop for big data processing across various industries will lead to a growing demand for Big Data Hadoop professionals. Certification proves to recruiters that you have the Big Data Hadoop skills they are looking for. Top employers receive hundreds of thousands of resumes for a handful of job openings every week, so a Hadoop certification can set you apart. The average salary of a Certified Hadoop Administrator is 123,000. You can advance your career with Big Data Hadoop certifications.

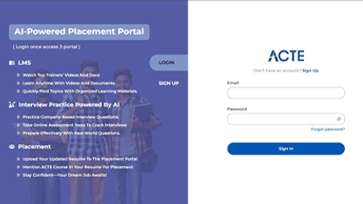
