Our Big Data Hadoop Course covers the many tools and frameworks in the Hadoop cluster and how to use them effectively. Industry professionals will teach you how to identify, analyse, and resolve problems in the framework, and you can achieve certification as an expert in Big Data Hadoop testing. Our Big Data Training in Bhubaneswar is delivered by experienced working experts. In addition to personal skill development, CV building, and updates on the newest trends and employment possibilities in India, we offer a 100 percent placement promise.

Additional Info

Why Is Big Data Important?

The value of big data does not lie in how much your organization has, but rather what it does with that data. Using any data source, you can get answers that can help 1) lower costs, 2) reduce time spent on tasks, 3) develop new products and optimize offerings, and 4) make smarter decisions. Business-related tasks can be accomplished with the use of big data and high-powered analytics, including:

Determining the root causes of failures, issues, and defects in near-real time.

Generating coupons at the point of sale based on customers' purchasing habits.

Recalculating entire risk portfolios quickly.

Detecting fraud early, before it affects your organization.

Challenges of Big Data:

The initial stages of Big Data projects can be very challenging for companies. Many neither understand the challenges that Big Data poses nor are equipped to deal with them.

1. Inadequate understanding of Big Data:-

Companies often fail to adopt Big Data because they do not understand it well enough. Employees may not know what the data is, how it is stored and processed, why it matters, or where it comes from. Even when data professionals understand what is going on, other staff may not.

2. Growth issues in data:-

Storing all these huge sets of data properly is one of the biggest challenges of Big Data. The amount of data that companies keep in their servers and databases is increasing rapidly, and these data sets become extremely difficult to handle as they grow exponentially over time. Much of the data is unstructured and comes from documents, videos, audio, text files, and similar sources. Because it does not sit in databases, it is hard to locate.

Companies can address this with data tiering, which stores data in the tier that suits it best. Depending on the size and significance of the data, that tier can be public cloud, private cloud, or flash storage. Companies are also embracing Big Data technologies, including Hadoop, NoSQL, and others.

3. Big Data tool selection can be confusing:-

Many companies have trouble choosing the right tool for analyzing and storing Big Data. What is the best technology for storing data: HBase or Cassandra? Is Hadoop MapReduce good enough for data analytics and storage, or is Spark a better choice?

Questions like these bother companies, and sometimes the answers are difficult to find. As a result, they make poor decisions and choose an inappropriate technology. The result is wasted time, money, effort, and work hours.

4. Lack of data professionals:-

Companies need skilled data professionals to operate these modern technologies and Big Data tools. These professionals include data scientists, data analysts, and data engineers who specialize in working with big data sets.

Despite the huge demand for Big Data professionals, companies face a shortage. Data handling tools have evolved rapidly, but most professionals have not kept pace with them. Actionable steps must be taken to close this gap.

5. Securing data:-

Securing these massive sets of data is one of the most daunting challenges of Big Data. Companies are often so busy understanding, storing, and analyzing their data sets that data security is pushed to the back burner. This is risky, because unprotected data repositories can become breeding grounds for malicious hackers.

6. The integration of data from various sources:-

An organization collects data through various channels, such as social media, ERP applications, financial reports, e-mails, presentations, and reports created by its employees. Combining all this data to prepare reports is difficult.

Firms often neglect this area. Data integration, however, is essential for analysis, reporting, and business intelligence, so it needs to be done well.

What are the challenges of using Hadoop?

Not all problems are well-suited to MapReduce programming. It works well for simple requests for information and for problems that can be divided into independent units, but it is inefficient for iterative and interactive analytical tasks. MapReduce is also file-intensive. Because nodes cannot communicate except through sorts and shuffles, iterative algorithms must go through multiple map-shuffle/sort-reduce phases, and multiple files are created between those phases, which is inefficient for advanced analytic computing.

It's widely acknowledged that there is a talent gap. Entry-level programmers with enough Java skills to be productive with MapReduce are hard to find. This is one of the reasons distribution providers are rushing to put relational (SQL) technology on top of Hadoop: SQL programmers are much easier to find than MapReduce programmers. Moreover, Hadoop administration is part art and part science, requiring an understanding of operating systems, hardware, and Hadoop kernel settings.
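
To make the map-shuffle/sort-reduce phases described above more concrete, here is a minimal single-process sketch in plain Python. It is not Hadoop code: the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `run_job`) and the sample lines are assumptions chosen for illustration, and a real job would run these phases across many nodes and write files between them.

```python
from collections import defaultdict
from itertools import chain


def map_phase(record):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in record.split()]


def shuffle_phase(pairs):
    """Shuffle/sort: group all values by key and order the keys."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return sorted(groups.items())


def reduce_phase(key, values):
    """Reduce: collapse the values collected for one key into a total."""
    return key, sum(values)


def run_job(records):
    """Run one full map -> shuffle/sort -> reduce pass over the records."""
    mapped = chain.from_iterable(map_phase(r) for r in records)
    shuffled = shuffle_phase(mapped)
    return [reduce_phase(key, values) for key, values in shuffled]


# Hypothetical input: each string stands in for one line of a file.
lines = ["big data needs big storage", "data drives decisions"]
counts = run_job(lines)
print(counts)
```

Each record is mapped independently, which is why problems that divide into independent units fit this model. An iterative algorithm would have to call `run_job` again on the previous output for every iteration, which in Hadoop means another round of intermediate files.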

Data security:-

Fragmented data security remains a challenge, although new tools and technologies have been developed to address it. Kerberos authentication, for example, can make Hadoop environments more secure. Full-scale data management and governance is another challenge: Hadoop does not provide easy-to-use, full-featured tools for data management, data cleansing, governance, and metadata.

Major features of Hadoop:

1. Scalable:-

Because Hadoop stores and distributes very large data sets across thousands of inexpensive servers, it is highly scalable. Traditional relational database systems (RDBMS), by contrast, do not scale easily to support such large amounts of data.

2. Cost-effective:-

Hadoop also gives businesses with exploding data sets a cost-effective way to store data. With traditional relational databases, scaling far enough to process massive amounts of data is extremely costly. To cut costs, many companies in the past would have down-sampled their data and classified it based on what they believed was most valuable. The raw data would then be deleted, because storing it was too expensive.

3. Flexible:-

In order to generate value from data, businesses can tap into diverse types of data (structured and unstructured) using Hadoop. As a result, businesses can gain valuable insights from social media, email conversations, and other data sources using Hadoop.

4. Fast:-

Rather than keeping data in one specific location, Hadoop's storage method uses a distributed file system that maps data to wherever it is located on the cluster. Because the tools for analyzing the data usually run on the same servers that hold the data, processing is much faster.

5. Resilient to failure:-

Fault tolerance is one of Hadoop's main advantages. When data is sent to one node in a cluster, it is also replicated to other nodes in the cluster, so another copy is available in the event of a failure.
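
The effect of replication described above can be illustrated with a small simulation. This is only a sketch under stated assumptions: the node and block names are hypothetical, replicas are placed at random rather than by HDFS's actual placement policy, and the replication factor of 3 mirrors the default value of HDFS's `dfs.replication` setting.

```python
import random

REPLICATION_FACTOR = 3  # default value of dfs.replication in HDFS


def place_replicas(block_ids, nodes, factor=REPLICATION_FACTOR, seed=0):
    """Assign every block to `factor` distinct nodes.

    Random placement is an assumption made for this sketch; it is not
    how HDFS chooses nodes in practice.
    """
    rng = random.Random(seed)
    return {block: set(rng.sample(nodes, factor)) for block in block_ids}


def readable_blocks(placement, failed_nodes):
    """Return the blocks that still have a replica on a live node."""
    failed = set(failed_nodes)
    return {block for block, holders in placement.items() if holders - failed}


# Hypothetical five-node cluster holding three blocks.
nodes = ["node1", "node2", "node3", "node4", "node5"]
placement = place_replicas(["blk_a", "blk_b", "blk_c"], nodes)

# With three copies of each block, losing one node never loses data.
survivors = readable_blocks(placement, failed_nodes=["node2"])
print(sorted(survivors))
```

With three replicas per block, a block becomes unreadable only if all three nodes holding it fail at the same time.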

Advantages of Big Data:

Consumers today have high expectations. They talk to other customers on social media and consider different options before making a purchase. After buying a product, they want to be treated as individuals and thanked. Your company can use big data to get actionable information in real time that enables direct engagement with your customers. For example, you can check the profile of a complaining customer in real time, see which products the complaint is about, and then carry out reputation management.

Using big data, you can redevelop the products and services you sell. You can use unstructured text from social networking sites, for example, to find out what other people think about your products. You can also study how minor variations in CAD (computer-aided design) images affect your processes and products. As a result, manufacturing processes can benefit greatly from big data.

Predictive analysis helps you keep your competitors at bay. Big data supports this by, for example, scanning and analyzing social media feeds and newspaper articles. Furthermore, big data helps you run health checks on your customers, suppliers, and other stakeholders so that you can avoid defaults.

Big data is also beneficial for data security. Big data tools help you map the data landscape of your organization, which helps in analyzing internal threats. You can detect sensitive information that is not adequately protected, and you can identify 16-digit numbers (which may be credit card numbers) that are being emailed or stored.

Big data also makes it possible to diversify revenue streams. You can use big data analytics to find trends that point to a completely new source of revenue. If you wish to succeed in the crowded online market, your website must be dynamic. By studying big data, you can customize your website to suit each visitor, taking into account their gender and nationality, for example. Amazon's item-based collaborative filtering (IBCF) drives its "Frequently bought together" and "Customers who bought this item also bought" sections.

In factories, big data means that machines no longer need to be replaced simply based on how long they have been in use. Replacing parts on a fixed schedule, as if every part wore out at the same rate, is expensive and impractical. Big data is also vital in healthcare, where it enables a more personalized and effective experience.
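
As an illustration of the 16-digit scan mentioned above, the following sketch searches free text for 16-digit sequences and keeps only those that pass the Luhn checksum used by payment card numbers. The regular expression, the function names, and the sample text are assumptions made for this example. A production scanner would need to handle more formats and card lengths.

```python
import re

# Sixteen digits, optionally split into groups of four by spaces or hyphens.
CANDIDATE = re.compile(r"\b\d{4}(?:[ -]?\d{4}){3}\b")


def luhn_valid(digits):
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:  # double every second digit from the right
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def find_card_like_numbers(text):
    """Return the 16-digit sequences in text that pass the Luhn check."""
    hits = []
    for match in CANDIDATE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            hits.append(digits)
    return hits


# Hypothetical e-mail text: one order number and one well-known test card number.
sample = "Order 1234567890123456 was paid with card 4111 1111 1111 1111."
print(find_card_like_numbers(sample))  # → ['4111111111111111']
```

The checksum step matters because many 16-digit numbers, such as order or tracking IDs, are not card numbers. In the sample, the order number is rejected and only the test card number is reported.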

Career Paths in Big Data:

Data Analyst:-

As a data analyst, you are responsible for processing big data using various tools. Data analysts often work with unstructured or semi-structured sources of data, and to process these sources they use tools like Hive, Pig, and NoSQL databases, as well as frameworks such as Hadoop and Spark. Their main job is to help companies increase revenue by uncovering the hidden potential of data and supporting sound decisions. A data analyst needs good arithmetic and problem-solving skills. Analysts study past data and trends and generate reports.

Programmer:-

Programmers write code for repetitive and conditional actions on available data sets. Writing good, efficient code requires strong analytical, mathematical, logical, and statistical skills. Programmers working with big data mostly use shell scripts, Java, Python, R, and so on. The data they work with is typically stored in flat files or databases, so an understanding of file systems and databases is also critical.

Admin:-

In the big data ecosystem, an admin is responsible for managing the infrastructure that hosts data and analytics-related tools. The role also includes managing all nodes and configuring their networks. Administrators make sure that the infrastructure is always available for big data operations. They handle the installation of various tools and manage the hardware of clusters. An admin needs a thorough understanding of file systems, operating systems, hardware, and networking.

Solution Architect:-

Solution architects study real-world problems and develop proper strategies to solve them using their expertise and the capabilities of big data. Choosing which software/programming language should be used to develop the solution is the responsibility of the solution architect. For the position of Solution Architect, a person must have solid problem-solving skills along with knowledge of the frameworks and tools that are available to process big data, their licensing costs, and alternative open source solutions.

Software Developer (Programmer):-

Developing Hadoop data abstraction SDKs and extracting value from data is the job of a Hadoop Data Developer.

Data Analyst:-

Having a good understanding of SQL makes working with Hadoop's SQL engines, such as Hive or Impala, an exciting prospect.

Business Analyst:-

Organizations looking to become more profitable are utilizing massive amounts of data, and a business analyst's role is crucial in achieving this.

ETL Developer:-

Using Spark tools for ETL can be a relatively easy transition if you're working with traditional ETL tools.

Testers:-

In the Hadoop world, testing is in high demand. The transition to this role is possible for testers who understand Hadoop and data profiling.

BI/DW professionals:-

BI/DW professionals can easily adapt their skills to Hadoop data architecture and data modelling.

Senior IT professionals:-

Senior professionals with deep domain knowledge and familiarity with existing data challenges can become consultants by gaining insight into how Hadoop tries to solve those challenges.

There are also generic roles, such as Data Engineer or Big Data Engineer, that are responsible for implementing solutions, mostly on cloud platforms. These roles become more rewarding as you gain experience with the cloud's data components.

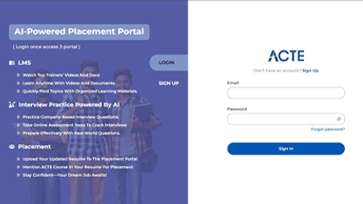
