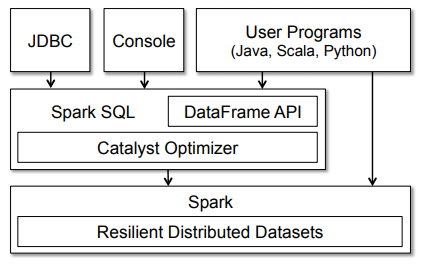

Spark SQL is one of the main components of the Apache Spark framework. It is mainly used for structured data processing. It provides various Application Programming Interfaces (APIs) in Python, Java, Scala, and R. Spark SQL integrates relational data processing with the functional programming API of Spark.

Spark SQL provides a programming abstraction called DataFrame and can also act as a distributed query engine (querying on different nodes of a cluster). It supports querying either with Hive Query Language (HiveQL) or with SQL.

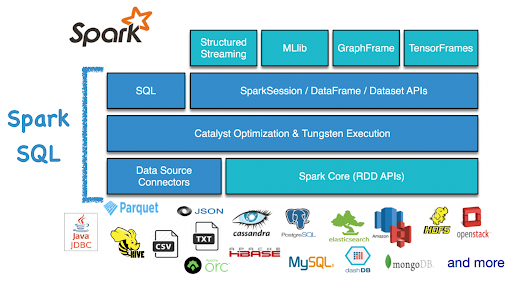

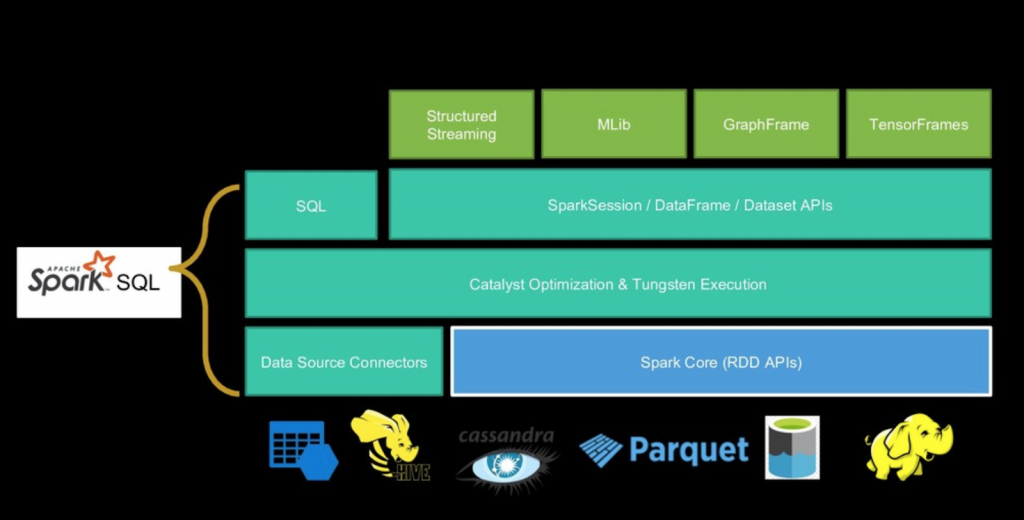

The following illustration explains the architecture of Spark SQL −

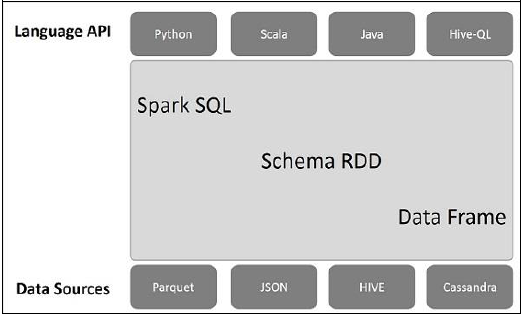

This architecture contains three layers namely, Language API, Schema RDD, and Data Sources.

- Language API: Spark is compatible with different languages and Spark SQL. It is also, supported by these languages- API (python, scala, java, HiveQL).

- Schema RDD : Spark Core is designed with special data structure called RDD. Generally, Spark SQL works on schemas, tables, and records. Therefore, we can use the Schema RDD as temporary table. We can call this Schema RDD as Data Frame.

- Data Sources: Usually the Data source for spark-core is a text file, Avro file, etc. However, the Data Sources for Spark SQL is different. Those are Parquet file, JSON document, HIVE tables, and Cassandra database.

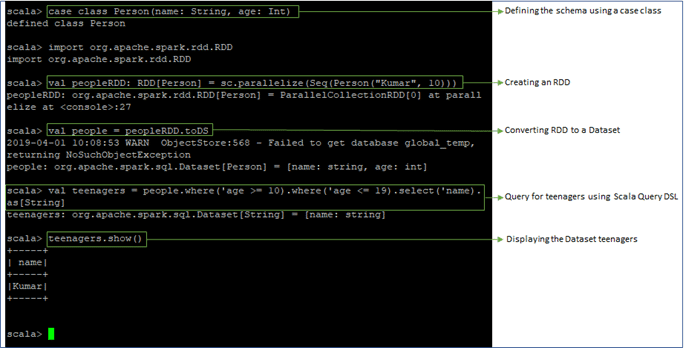

- In Spark SQL, Datasets comprise distributed computation that is converted into a sequence of RDDs called DAG (Directed Acyclic Graph). Here is a simple example for querying using Spark SQL

.

If you have any query related to Spark and Hadoop, kindly refer to our Big Data Hadoop and Spark Community!

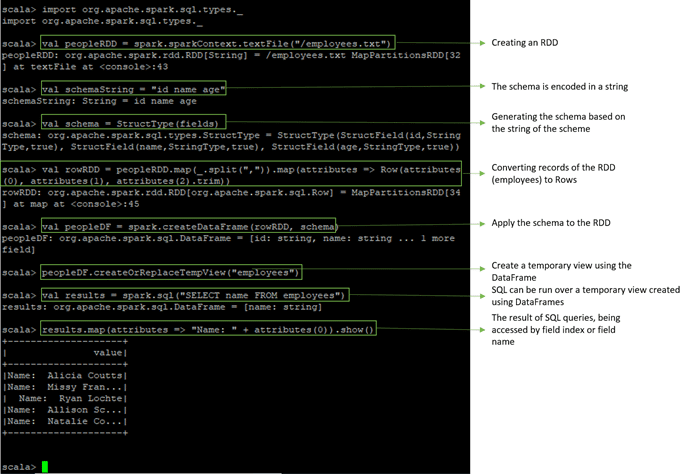

Adding a Schema to RDDs Programmatically- We can add a schema to an existing RDD programmatically. It can be done by importing the respective API, applying transformations, etc.

- In the below example, a text file is being stored into an RDD. And then, we are adding a schema to that RDD.

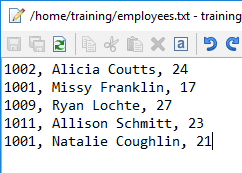

The file employees.txt has the following data:

Prepare yourself for top jobs by learning from these Apache Spark Interview Questions and Answers!

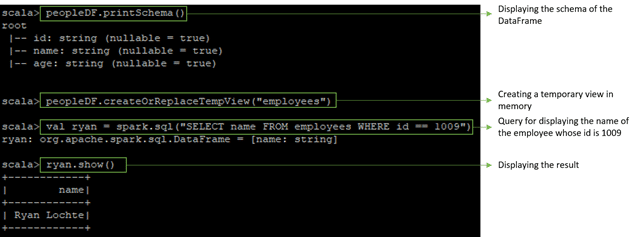

Caching Tables In-memory- Here, we are creating a temporary view in memory for the above text file. Temporary views can be accessed faster as they are stored in memory. Since queries are performed on a cached table, we will get the desired results in no time.

Caching is mainly done for faster execution.

- There were some limitations with RDDs. When working with structured data, there was no inbuilt optimization engine. On the basis of attributes, the developer optimized each RDD. Also, there was no provision to handle structured data. The DataFrame in Spark SQL overcomes these limitations of RDD. Spark DataFrame is Spark 1.3 release. It is a distributed collection of data ordered into named columns. Concept wise it is equal to the table in a relational database or a data frame in R/Python. We can create DataFrame using:

- Structured data files

- Tables in Hive

- External databases

- Using existing RDD

- Spark Dataset is an interface added in version Spark 1.6. it is a distributed collection of data. Dataset provides the benefits of RDDs along with the benefits of Apache Spark SQL’s optimized execution engine. Here an encoder is a concept that does conversion between JVM objects and tabular representation.

- A Dataset can be made using JVM objects and after that, it can be manipulated using functional transformations (map, filter etc.). The Dataset API is accessible in Scala and Java. Dataset API is not supported by Python. But because of the dynamic nature of Python, many benefits of Dataset API are available. The same is the case with R. Using a Dataset of rows we represent DataFrame in Scala and Java. Follow this comparison guide to learn the comparison between Java vs Scala.

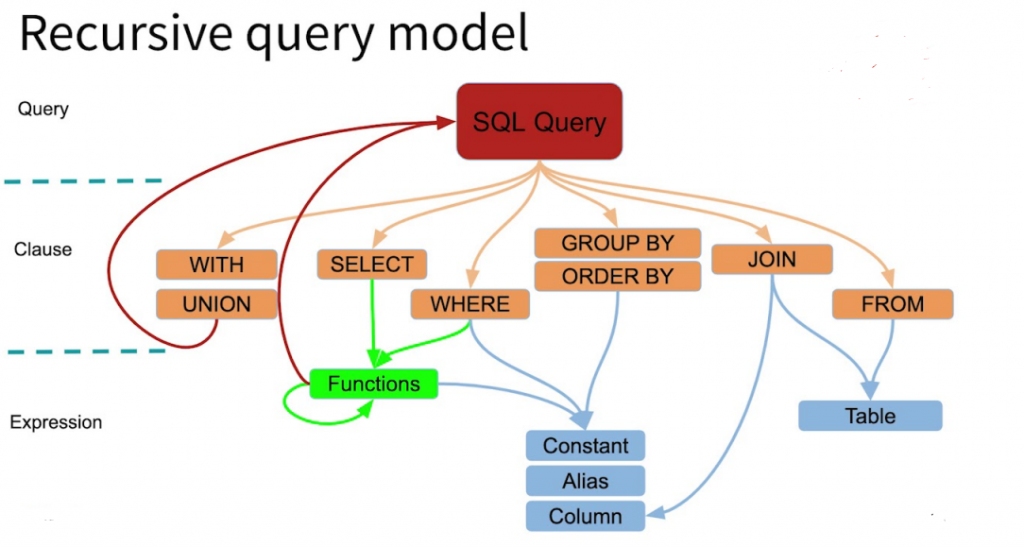

- The optimizer used by Spark SQL is Catalyst optimizer. It optimizes all the queries written in Spark SQL and DataFrame DSL. The optimizer helps us to run queries much faster than their counter RDD part. This increases the performance of the system.

- Spark Catalyst is a library built as a rule-based system. And each rule focusses on the specific optimization. For example, ConstantFolding focus on eliminating constant expression from the query.

- It executes SQL queries.

- We can read data from existing Hive installation using SparkSQL.

- When we run SQL within another programming language we will get the result as Dataset/DataFrame.

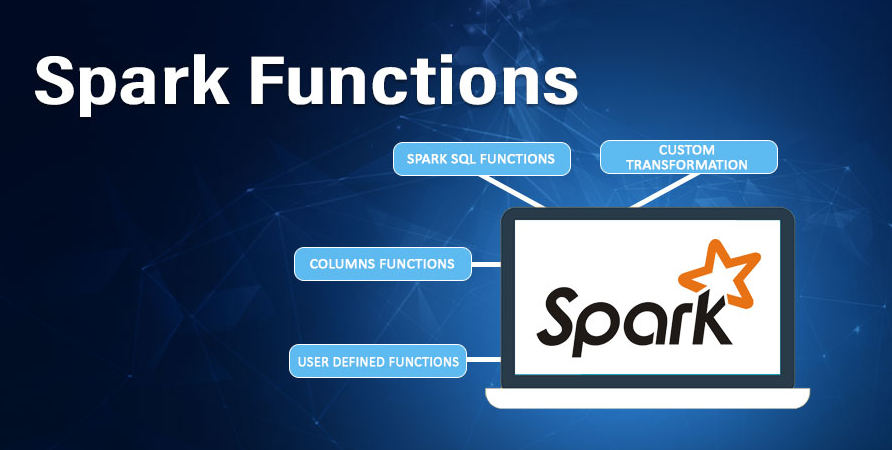

Built-In function : It offers a built-in function to process the column value. We can access the inbuilt function by importing the following command: Import org.apache.spark.sql.functions

User Defined Functions(UDFs) : UDF allows you to create the user define functions based on the user-defined functions in Scala. Refer this guide to learn the features of Scala.

Aggregate functions : These operate on a group of rows and calculate a single return value per group.

Windowed Aggregates(Windows) : These operate on a group of rows and calculate a single return value for each row in a group.

Advantages of Spark SQL

Integrated : Apache Spark SQL mixes SQL queries with Spark programs. With the help of Spark SQL, we can query structured data as a distributed dataset (RDD). We can run SQL queries alongside complex analytic algorithms using tight integration property of Spark SQL.

Unified Data Access : Using Spark SQL, we can load and query data from different sources. The Schema-RDDs lets single interface to productively work structured data. For example, Apache Hive tables, parquet files, and JSON files.

High compatibility : In Apache Spark SQL, we can run unmodified Hive queries on existing warehouses. It allows full compatibility with existing Hive data, queries and UDFs, by using the Hive fronted and MetaStore.

Standard Connectivity : It can connect through JDBC or ODBC. It includes server mode with industry standard JDBC and ODBC connectivity.

Scalability : To support mid-query fault tolerance and large jobs, it takes advantage of RDD model. It uses the same engine for interactive and long queries.

Performance Optimization : The query optimization engine in Spark SQL converts each SQL query to a logical plan. Further, it converts to many physical execution plans. Among the entire plan, it selects the most optimal physical plan for execution. Read more about Apache Spark performance tuning techniques in detail.

For batch processing of Hive tables : We can make use of Spark SQL for fast batch processing of Hive tables.

ConclusionIn conclusion to Spark SQL, it is a module of Apache Spark that analyses the structured data. It provides Scalability, it ensures high compatibility of the system. It has standard connectivity through JDBC or ODBC. Thus, it provides the most natural way to express the Structured Data.