Impala is the open source, native analytic database for Apache Hadoop. It is shipped by vendors such as Cloudera, MapR, Oracle, and Amazon. The examples provided in this tutorial have been developing using Cloudera Impala

Apache Impala

- Apache Impala is a massively parallel processing query engine that executes on Hadoop platform. It is an open source software, which was developed on the basis of Google’s Dremel paper.

- It is an interactive SQL like query engine that runs on top of Hadoop Distributed File System (HDFS). Moreover, Impala is used as a scalable parallel database technology provided to Hadoop, which enables the users to issue low-latency SQL queries to the data stored in HDFS and Apache HBase without requiring data movement or transformation.

- Impala integrates with the Apache Hive metastore database to share database table information between both the components. Analysts and Data Scientists perform analytical operations on data stored in Hadoop and advertises it via SQL tools. It helps to provide large-scale data processing (via MapReduce), and interactive queries. Furthermore, it can be processed on the same system using the same data and metadata, which helps to eliminate the need to shift data sets into specialized systems.

Apache Impala installation

General build requirements

- sudo apt-get install build-essential git maven

- sudo apt-get install openjdk-8-jdk

- sudo apt-get install libpython-dev cmake

- sudo apt-get install libssl-dev libsasl2-dev

Build the test environment

Install Postgres

- sudo apt-get install postgresql

- sudo service postgresql start

Edited pg_hba.conf to change “peer” to “trust”

- vim /etc/postgresql/*/main/pg_hba.conf

- Go to end of file (shift+G) Replace peer, ident, md5 by trust in last 4 lines

- sudo -u postgres psql postgres

- CREATE ROLE hiveuser LOGIN PASSWORD ‘password’;

- ALTER ROLE hiveuser WITH CREATEDB;

Setup pass wordless ssh for hbase

- sudo apt-get install openssh-server

- sudo service ssh start

- ssh-keygen -t dsa

Do not type in any passkey. Just press enter.

- cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

Setup ntpd for Kudu

- sudo apt-get install ntp

- sudo systemctl restart ntp.service

Add a path for HDFS domain sockets

- sudo mkdir /var/lib/hadoop-hdfs/

Build native toolchain

- sudo apt-get install build-essential git

- sudo apt-get install bison

- sudo apt-get install autoconf automake libtool

- sudo apt-get install libz-dev

- sudo apt-get install libssl-dev

- sudo apt-get install libncurses-dev

- sudo apt-get install libsasl2-dev libkrb5-dev

Checkout and build the toolchain

- git clone https://github.com/cloudera/native-toolchain.git

- cd native-toolchain

- ./buildall.sh

Clone Impala

- cd /etc

- git clone https://git-wip-us.apache.org/repos/asf/incubator-impala.git Impala

- cd Impala

Export variables

- export JAVA_HOME=/usr/lib/jvm/java-7-oracle

- export LD_LIBRARY_PATH=/usr/lib/x86_64-linux-gnu

- export LC_ALL=”en_US.UTF-8″

- export M2_HOME=/usr/share/maven

- export M2=$M2_HOME/bin

- export PATH=$M2:$PATH

- export IMPALA_HOME=/etc/Impala/

- export BOOST_LIBRARYDIR=/usr/lib/x86_64-linux-gnu

Using custom tool chain with Impala

- cd ${IMPALA_HOME}

- (mkdir -p toolchain && cd toolchain && ln -s ${NATIVE_TOOL CHAIN_HOME}/build/* .)

- export SKIP_TOOLCHAIN_BOOTSTRAP=true

- export IMPALA_TOOLCHAIN=${IMPALA_HOME}/toolchain

Build the packages

- ./buildall.sh -noclean -notests -skiptests

Building local mini cluster(first time only)

- ${IMPALA_HOME}/buildall.sh -noclean -skiptests -build_shared_libs -format

Start services of cluster

- source ${IMPALA_HOME}/bin/impala-config.sh

- ${IMPALA_HOME}/bin/start-impala-cluster.p

Verify installation

source ${IMPALA_HOME}/bin/impala-config.sh # If you didn’t already source impala-config.sh in this shell

- impala-shell.sh -q “SELECT version()”

Starting Impala Shell without Kerberos authentication

Connected to localhost:21000

Server version: impalad version 2.2.0-INTERNAL DEBUG (build 47c90e004aecb928a37b926080098d30b96b4330)

Query: select version()

- version()

- impalad version 2.2.0-INTERNAL DEBUG (build 47c90e004aecb928a37b926080098d30b96b4330)

- Built on Sun, Mar 22 15:22:57 PDT 2015

- Fetched 1 row(s) in 0.05s

- To start Impala, open the terminal and execute the following command.

- [cloudera@quickstart ~] $ impala-shell

- This will start the Impala Shell, displaying the following message.

Starting Impala Shell without Kerberos authentication

Connected to quickstart.cloudera:21000

Server version:

- impalad version 2.3.0-cdh5.5.0 RELEASE (build0c891d79 aa 38f297d244855 a32f1e17280e2129b)

Welcome to the Impala shell. Copyright (c) 2015 Cloudera, Inc. All rights reserved.

- (Impala Shell v2.3.0-cdh5.5.0 (0c891d7) built on Mon Nov 9 12:18:12 PST 2015)

Press TAB twice to see a list of available commands.

- [quickstart.cloudera:21000] >

Note: We will discuss all the impala-shell commands in later chapters.

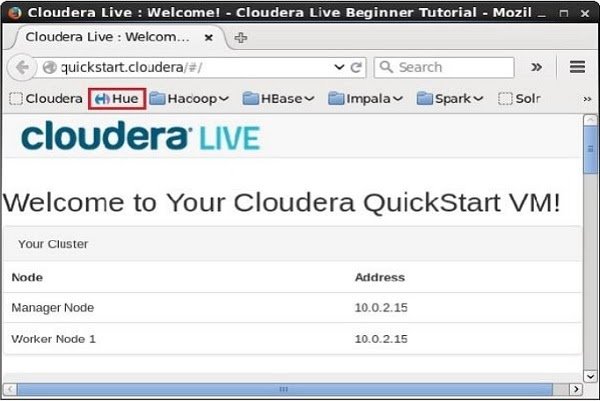

Impala Query editorIn addition to Impala shell, you can communicate with Impala using the Hue browser. After installing CDH5 and starting Impala, if you open your browser, you will get the cloudera homepage as shown below.

Now, click the bookmark Hue to open the Hue browser. On clicking, you can see the login page of the Hue Browser, logging with the credentials cloudera and cloudera.

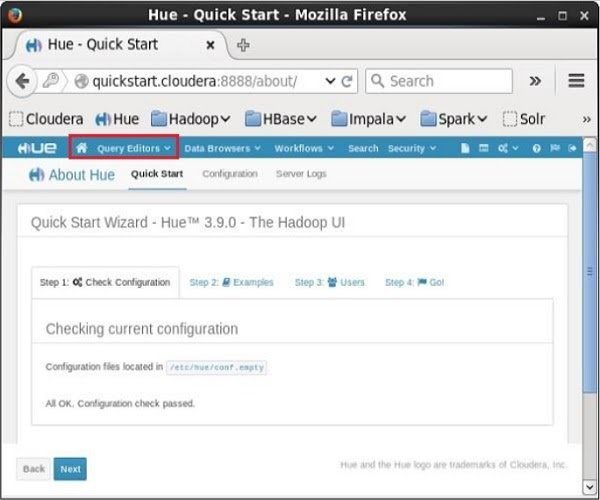

As soon as you log on to the Hue browser, you can see the Quick Start Wizard of Hue browser as shown below.

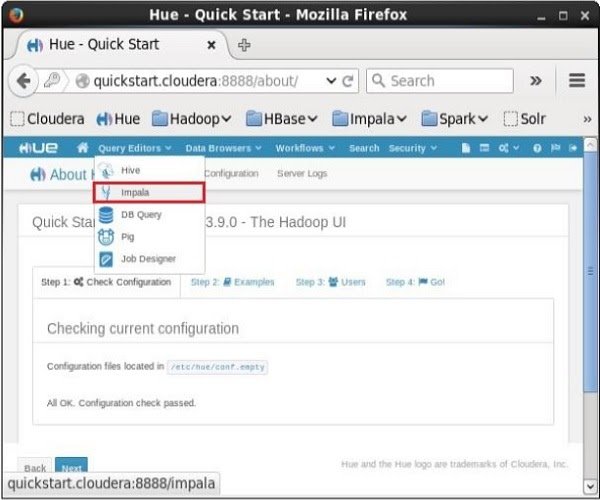

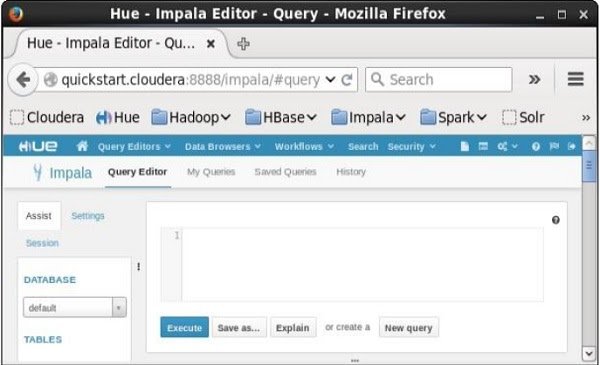

On clicking the Query Editors drop-down menu, you will get the list of editors Impala supports as shown in the following screenshot.

On clicking Impala in the drop-down menu, you will get the Impala query editor as shown below.

Impala Features

Impala provides support for:

1. Impala offers support for most common SQL-92 features of Hive Query Language (HiveQL). It includes SELECT, joins, and aggregate functions.

2. Moreover, it also provides support for HDFS, HBase, and Amazon Simple Storage System (S3) storage. It includes:

- HDFS file formats: delimited text files, Parquet, Avro, SequenceFile, and RCFile.

- Compression codecs: Snappy, GZIP, Deflate, BZIP.

3. Also, supports common data access interfaces. Includes:

- JDBC driver.

- ODBC driver.

4. However, it supports Hue Beeswax and the Impala Query UI.

5. Also, supports impala-shell command-line interface.

6. Moreover, supports Kerberos authentication.

Use Impala- Impala provides parallel processing database technology on top of Hadoop eco-system. So, it can smoothly perform low latency queries interactively.

- Impala is a time-saving job which gives results in seconds whereas, in Hive MapReduce, it takes time in launching and processing queries.

- Impala is also beneficial for Analytics and Data Scientists to perform analytics on data stored in Hadoop File System with the help of real-time query engine.

- Because of providing real-time results, it works perfectly for reporting tools or visualization tools like Pentaho.

- Impala provides in-built support of processing all of the Hadoop supported file formats (ORC, Parquet.etc.). This project provides high-performance, low-latency SQL queries on data stored in popular Apache Hadoop file formats.

There are several advantages of Impala which are given as follows:-

- Fast Speed: We can process data in HDFS at very fast speed by using Impala.

- Migrating data is not necessary: We don’t need to transform and move data store on Hadoop even if the data processing is carried where the data resides.

- Big Data: A user can easily store and manage a large amount of data.

- Languages: Impala does not have issue respect to language support, because it supports all languages.

- High Performance: It offers high performance and low latency task for Hadoop.

- Distributed: It provides a distributive environment in which a query is distributed among different clusters for reducing workload and provides convenient scalability.

- Easy Access: We can easily access the data that is stored in HDFS, HBase, and Amazon s3 without requiring the knowledge of Java.

- Hence, in this Impala Tutorial for beginners, we have seen the complete lesson to Impala. Still, if any query occurs in Impala tutorial, feel free to ask in the comment section.

LMS

LMS