Recognized as the No. 1 Institute for Big Data and Hadoop Training in Indira Nagar

Participate in the Big Data and Hadoop Training in Indira Nagar to deepen your knowledge of the Hadoop framework, data processing, and big data analytics. Gain hands-on experience with large-scale data systems.

In addition to hands-on experience, our Big Data and Hadoop Course in Indira Nagar covers key Big Data platforms and technologies like Hadoop, MapReduce, Hive, Pig, and HBase in detail. Learn how to design, build, and deploy scalable solutions that can process big datasets and extract valuable information.

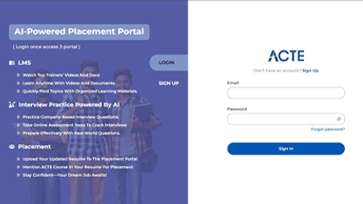

- Get unrestricted access to interviews with more than 400 leading firms.

- Take advantage of an affordable, industry-focused program for today’s workforce.

- Take part in interactive lectures and projects to gain practical experience.

- Take advantage of Big Data and Hadoop placement support to launch your career.

- Work toward the fundamental Hadoop and Big Data certification that top companies recognize.

- Advance your career in the rapidly growing Big Data and analytics sector.
