- Introduction

- Search Engine

- What Does a Search Engine Do?

- How Do Search Engines Work?

- What is Search engine crawling?

- Search Engine Ranking

- Search Engine Components

- How do search engines work

- Search Engine Working

- Architecture

- Search Engine Processing

- The Scope of Search Engine Optimization

- List of the Top 10 Most Popular Search Engines

- Advantages of Search Engines

- Disadvantages of Search Engines

- Certification

- Conclusion

- A search engine refers to a huge database of web resources such as pages, newsgroups, programs, and images. It helps users find information on the World Wide Web.

- The user can search for any information by passing a query in the form of keywords or phrases. The search engine then looks for relevant information in its database and returns it to the user.

- Have you ever thought about how many times a day you use Google or another search engine to search the web? Is it a few times, many times, or sometimes even more? Did you know that Google alone handles trillions of searches each year?

- The numbers are huge. Search engines have become part of our daily lives. We use them as a learning tool, a shopping tool, for fun and leisure, and also for business.

- Search engines are complex computer programs. Before they even allow you to type a query and search the web, they have to do a lot of preparation work so that when you click “Search”, you are presented with a set of precise, high-quality results that answer your question or query.

- What does this ‘preparation work’ include? Three main stages. The first stage is discovering the information, the second is organizing it, and the third is ranking it. These stages are generally known as crawling, indexing, and ranking.

- Crawling is the discovery process in which search engines send out a team of robots (known as crawlers or spiders) to find new and updated content. Content can vary (it could be a web page, an image, a video, a PDF, and so on), but regardless of the format, content is discovered through links.

- Googlebot starts by fetching a few web pages and then follows the links on those pages to find new URLs. By hopping along this path of links, the crawler can find new content and add it to its index, called Caffeine (a massive database of discovered URLs), to be retrieved later when a searcher is looking for information that the content on that URL is a good match for.

- Search engine index: Search engines process and store the information they find in an index, a huge database of all the content they have discovered and consider good enough to serve to searchers.
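The link-following discovery described above can be sketched as a breadth-first traversal. This is only an illustration, not how Googlebot is implemented: the `LINKS` dictionary stands in for real pages and their links, and the `example.com` URLs and the `crawl` function are hypothetical names chosen for this example.

```python
from collections import deque

# Hypothetical in-memory "web": each URL maps to the URLs it links to.
LINKS = {
    "https://example.com/": ["https://example.com/a", "https://example.com/b"],
    "https://example.com/a": ["https://example.com/b", "https://example.com/c"],
    "https://example.com/b": ["https://example.com/"],
    "https://example.com/c": [],
}


def crawl(seeds, links):
    """Breadth-first discovery of every URL reachable from the seed pages."""
    discovered = list(seeds)  # URLs in the order they were found
    seen = set(seeds)         # guards against revisiting a URL
    queue = deque(seeds)
    while queue:
        url = queue.popleft()
        for target in links.get(url, []):
            if target not in seen:
                seen.add(target)
                discovered.append(target)
                queue.append(target)
    return discovered


print(crawl(["https://example.com/"], LINKS))
# ['https://example.com/', 'https://example.com/a',
#  'https://example.com/b', 'https://example.com/c']
```

A real crawler would fetch each page over the network and parse its links; the `seen` set is what keeps the loop from following circular links forever.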

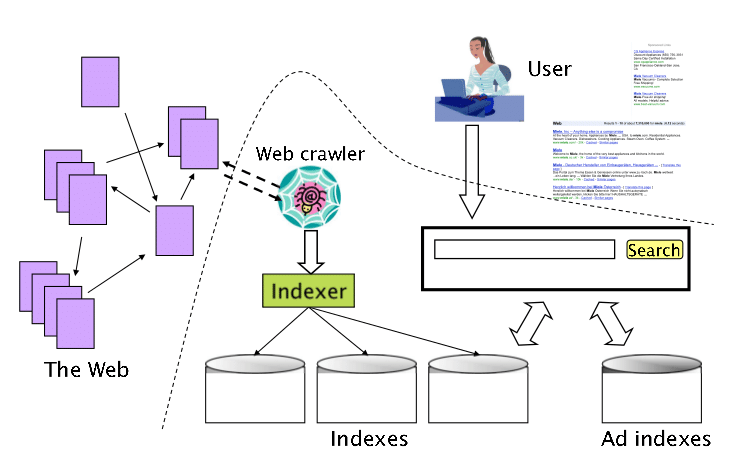

- Web Crawler

- Database

- Search Interfaces

- Included Pages

- Excluded Pages

- Document Types

- Frequency of Crawling

- Size of the database

- Freshness of the database

- Operators

- Phrase Searching

- Truncation

- Location and frequency

- Link Analysis

- Clickthrough measurement

- The search engine looks for the keyword in the index of a predefined database instead of going directly to the web to search for the keyword.

- It then uses software to search for the information in the database. This software component is known as a web crawler.

- Once the web crawler finds the pages, the search engine shows the relevant web pages as a result. These retrieved web pages generally include the title of the page, the size of the text portion, the first several sentences, and so on.

- These search criteria may vary from one search engine to another. The retrieved information is ranked according to various factors such as keyword frequency, relevance of information, links, and so on. The user can click on any of the search results to open it.
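The lookup-and-rank steps above can be sketched as follows. This is a simplified illustration that ranks only by keyword frequency, one of the factors just mentioned. The `PAGES` data and the `search` function are hypothetical, and real search engines combine many more signals.

```python
from collections import Counter

# Hypothetical pre-built database: page title -> page text.
PAGES = {
    "Java Tutorial": "java tutorial learn java basics java examples",
    "Python Guide": "python guide with a short java comparison",
    "Cooking Tips": "easy recipes and kitchen tips",
}


def search(keyword, pages):
    """Return (title, count) pairs for pages containing the keyword,
    with the most frequent match first."""
    keyword = keyword.lower()
    hits = []
    for title, text in pages.items():
        count = Counter(text.lower().split())[keyword]
        if count > 0:
            hits.append((title, count))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


print(search("java", PAGES))
# [('Java Tutorial', 3), ('Python Guide', 1)]
```

Note that the search runs against the stored `PAGES` data, not against the live web, which mirrors the first step in the list.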

- Content collection and refinement

- Search core

- User and application interfaces

- Text acquisition

- Text transformation

- Index creation

- Type of content: If the user is asking for images, the returned results will contain images and not text.

- Quality of the content: Content needs to be thorough, useful and informative, unbiased, and cover both sides of a story.

- Quality of the website: The overall quality of a website matters. Google will not show pages from websites that do not meet its quality standards.

- Date of publication: For news-related queries, Google wants to show the latest results, so the date of publication is also taken into account.

- Popularity of a page: This has nothing to do with how much traffic a website has, but with how other websites perceive the particular page.

- Language of the page: Users are served pages in their own language, which is not always English.

- Web page speed: Websites that load fast (think 2-3 seconds) have a small advantage over websites that are slow to load.

- Device type: Users searching on mobile are served mobile-friendly pages.

- Location: Users searching for results in their area, for example “Italian restaurants in Ohio”, will be shown results related to their location.

- Many of us have the misconception that search engine optimization (SEO) has little or no scope. I think SEO has great scope. As technology advances, newer techniques are being used for websites, but that doesn’t mean SEO is finished. SEO is a subset of digital marketing, which also includes areas such as content marketing, social media marketing, email marketing, paid advertising, and many others.

- SEO has its own importance and role. On-page SEO and off-page SEO are the key terms in website optimization. They help increase website traffic and improve the website’s ranking on various search engines.

- There is a lot of scope in the SEO industry. SEO will not die out in the future. Its course will change with Google’s updates, and we simply need to follow them and do quality work. SEO is a very long process, and for every business it plays an important role in increasing the visibility of the business on search engines.

- Achieving high rankings not only increases website traffic but also improves brand awareness of the product or service over time. It makes searchers associate their interests (as expressed through the keyword phrases they use) with your brand. This helps your organization build a perception of reliability and expertise.

- Eliminates the need to find information manually.

- Performs search operations at a very high speed.

- Search engines offer a wide variety of resources for obtaining relevant and valuable information from the Internet.

- By using a search engine, we can get information in various fields such as education, entertainment, games, and so on.

- The information we get from a search engine comes in the form of blogs, PDFs, PPTs, text, images, videos, and audio.

- Search engines are able to provide more accurate results.

- Usually, search engines such as Google, Bing, and Yahoo allow end users to search their content free of charge. Search engines place no restriction on the number of searches, so end users can spend as much time as they need searching for valuable content to meet their requirements.

- Search engines allow us to use advanced search options to get relevant, valuable, and informative results.

- Advanced search results make our searches more flexible as well as more sophisticated.

- For example, to search for educational institution sites offering a B.Tech in computer science engineering, use “computer science engineering site:.edu” to get the advanced result.

- Search engines allow us to search for relevant content based on a particular keyword.

- For example, the site “javatpoint” ranks highly in searches for the term “java tutorial” because a search engine sorts its result pages by the relevance of the content. That is why we see the highest-scoring results at the top of the SERP.

- Sometimes the search engine takes some time to display relevant, valuable, and informative content.

- Search engines, especially Google, frequently update their algorithms, and it is very difficult to find out the algorithm on which Google runs.

- They can make end users complacent, since users turn to search engines all the time, even for their smallest queries.

- Search engines have become very complex computer programs. Their interface may be simple, but the way they work and make decisions is far from simple.

- The process starts with crawling and indexing. During this phase, the search engine crawlers gather as much information as possible about all the websites that are publicly available on the Internet.

- They discover, process, sort, and store this information in a format that search engine algorithms can use to make a decision and return the best possible results to the user.

- The amount of data they have to process is enormous, and the process is automated. Human intervention is limited to designing the rules used by the various algorithms, but even this step is gradually being replaced by computers with the help of artificial intelligence.

Introduction :-

Search Engine :-

A search engine is an online answering machine that is used to search, understand, and organize the content in its database based on the search query (keywords) entered by end users (internet users). To display search results, a search engine first finds the relevant results in its database, sorts them into an ordered list based on its search algorithm, and displays them to the end user. The resulting ordered list of content is commonly known as a Search Engine Results Page (SERP).

What Does a Search Engine Do?

How Do Search Engines Work?

What is Search engine crawling?

Search Engine Ranking :-

When someone performs a search, search engines scour their index for highly relevant content and then order that content in the hope of answering the searcher’s query. This ordering of search results by relevance is known as ranking. In general, you can assume that the higher a website is ranked, the more relevant the search engine believes that site is to the query.

It is possible to block search engine crawlers from part or all of your site, or to instruct search engines to avoid storing certain pages in their index. While there can be reasons for doing this, if you want your content found by searchers, you first have to make sure it is accessible to crawlers and is indexable. Otherwise, it is as good as invisible.
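One common way to keep crawlers out of part of a site is a robots.txt file. The sketch below uses Python’s standard `urllib.robotparser` module to check how a well-behaved crawler would interpret such a file. The rules, the `ExampleBot` user agent, and the `example.com` URLs are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content: every crawler is asked to stay out of /private/.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# A polite crawler checks each URL before fetching it.
print(parser.can_fetch("ExampleBot", "https://example.com/index.html"))
# True
print(parser.can_fetch("ExampleBot", "https://example.com/private/data.html"))
# False
```

This covers only the crawling side. Keeping an already-crawlable page out of the index is usually handled separately, for example with a `noindex` robots meta tag, which is not shown here.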

Search Engine Components :-

Generally, a search engine has the following basic components:

1. Web Crawler:

A web crawler is also known as a search engine bot, web robot, or web spider. It plays an essential role in search engine optimization (SEO) strategy. It is essentially a software component that traverses the web, then downloads and collects information from across the Internet.

2. Database:

The search engine database is a type of non-relational database. It is where all the web information is stored, and it holds a large number of web resources. Some of the most popular search engine databases are Amazon Elasticsearch Service and Splunk. The following two database features can affect the search results:

3. Search Interfaces:

The search interface is one of the most important components of a search engine. It is an interface between the user and the database, and it helps users run search queries against the database. The following search interface features affect the search results:

4. Ranking Algorithms:

The ranking algorithm is used by Google to rank web pages according to the Google search algorithm. The following ranking features affect the search results:

How do search engines work :-

Every search engine performs the following tasks:

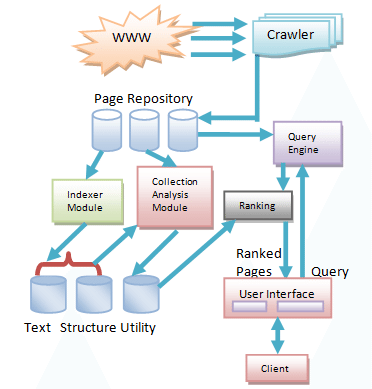

1. Crawling: Crawling is the first stage, in which a search engine uses web crawlers to find, visit, and download web pages on the WWW (World Wide Web). Crawling is performed by software robots known as “spiders” or “crawlers.” These robots are used to review the website content.

2. Indexing: An index is an online library of websites, which is used to sort, store, and organize the content found during crawling. Once a page is indexed, it can appear as a result for the most valuable and most relevant search queries.

3. Ranking and Retrieval: Ranking is the last stage of the search engine. It is used to provide the piece of content that best answers the user’s query. It displays the best content at the top of the results page.

Search Engine Working :-

A web crawler, a database, and a search interface are the major components that make a search engine work. Search engines use the Boolean operators AND, OR, and NOT to restrict and widen the results of a search. The search engine performs the following steps:

Architecture :-

The search engine architecture comprises the three basic layers listed below:

Search Engine Processing :-

Indexing Process:

The indexing process comprises the following three tasks:

1. Text acquisition: It identifies and stores documents for indexing.

2. Text transformation: It transforms documents into index terms or features.

3. Index creation: It takes the index terms created by text transformation and creates data structures to support fast searching.
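The three indexing tasks can be sketched with an inverted index, one common data structure for fast searching. This is a minimal illustration under assumptions: the `DOCUMENTS` sample and the function names `transform` and `build_index` are hypothetical, and the tokenizer is deliberately naive. The last lines show how Boolean AND, OR, and NOT queries reduce to set operations on the index.

```python
import re
from collections import defaultdict

# Hypothetical documents gathered during text acquisition: id -> raw text.
DOCUMENTS = {
    1: "Search engines crawl the web.",
    2: "Crawlers follow links between web pages.",
    3: "An index makes search fast.",
}


def transform(text):
    """Text transformation: lowercase the text and split it into index terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(documents):
    """Index creation: map each term to the set of document ids containing it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for term in transform(text):
            index[term].add(doc_id)
    return index


index = build_index(DOCUMENTS)

# Boolean queries become set operations on the index entries.
print(sorted(index["search"] & index["web"]))    # search AND web  -> [1]
print(sorted(index["search"] | index["links"]))  # search OR links -> [1, 2, 3]
print(sorted(index["web"] - index["crawl"]))     # web NOT crawl   -> [2]
```

Because each lookup is a dictionary access, answering a query does not require rescanning the documents, which is the point of building the index ahead of time.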

To give you an idea of how matching works, these are the most important factors:

Title and content relevance: How relevant the title and content of the page are to the user query.

A page that has a lot of references (backlinks) from other websites is considered more popular than pages with no links and thus has a better chance of being picked up by the algorithms. This process is also known as off-page SEO.
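As a rough illustration of the backlink idea, the sketch below counts how many distinct pages link to each page and orders pages by that count. It is a deliberate simplification and not Google’s actual algorithm. The `OUTLINKS` graph, the `*.example` names, and the `backlink_counts` function are hypothetical.

```python
from collections import Counter

# Hypothetical link graph: page -> pages it links to.
OUTLINKS = {
    "a.example": ["c.example", "b.example"],
    "b.example": ["c.example"],
    "c.example": ["a.example"],
    "d.example": ["c.example"],
}


def backlink_counts(outlinks):
    """Count, for every page, how many distinct other pages link to it."""
    counts = Counter({page: 0 for page in outlinks})
    for source, targets in outlinks.items():
        for target in set(targets):
            if target != source:  # ignore self-links
                counts[target] += 1
    return counts


# Most-linked pages first; ties are broken alphabetically.
ranking = sorted(backlink_counts(OUTLINKS).items(), key=lambda item: (-item[1], item[0]))
print(ranking)
# [('c.example', 3), ('a.example', 1), ('b.example', 1), ('d.example', 0)]
```

Here `c.example` comes first because three other pages link to it, while `d.example` has no backlinks at all.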

That is just the tip of the iceberg. As mentioned before, Google uses more than 255 factors in its algorithms to ensure that its users are happy with the results they get.

The Scope of Search engine Optimization :-

List of the Top 10 Most Popular Search Engines :-

1. Google

2. Bing

3. Yahoo

4. Ask.com

5. AOL

6. Baidu

7. WolframAlpha

8. DuckDuckGo

9. Internet Archive

10. Yandex.ru

Advantages of Search Engines :-

Searching for content on the Internet has become one of the most popular activities all over the world. In the current era, the search engine is an essential part of everyone’s life because it offers various popular ways to find valuable, relevant, and informative content on the Internet.

A list of the advantages of search engines is given below:

1. Time-saving:

Search engines help us save time in the following two ways:

2. Variety of information:

3. Accuracy:

4. Free Access:

5. Advanced Search:

6. Relevance:

Disadvantages of Search Engines :-

Search engines have the following disadvantages:

Certification :-

The cost of SEO training varies: some introductory seminars lasting a couple of days cost anywhere from $1,100 to $4,000. Often, these classes cover only the basics (for example, simply explaining what a keyword is, and perhaps going a little further into the terminology).