What is Docker?

Plainly put, Docker is an open-source platform for developing, shipping, and running applications. It lets you separate applications from their underlying infrastructure so that software can be delivered more quickly. Docker’s main benefit is that it packages applications in “containers,” which makes them portable across systems running the Linux operating system (OS) or Windows OS. Although container technology has been around for a while, Docker’s approach brought it into the mainstream and made it one of the most popular forms of container technology.

The brilliance of Docker is that, once you package an application and all its dependencies into a Docker container, it will run the same way in any environment that supports Docker. DevOps professionals can also build applications with Docker and ensure that they do not interfere with each other. As a result, you can build a container with several applications installed in it and hand it to your QA team, which then only needs to run the container to replicate your environment. Using Docker tools therefore saves time. In addition, unlike with Virtual Machines (VMs), you don’t have to bundle and manage a full guest operating system for each application: a container runs wherever a compatible container runtime is available.

What is a Docker Container?

By now, your interest in Docker containers is no doubt piqued. A Docker container, as partially explained above, is a standard unit of software that packages up code and all its dependencies so the application runs quickly and reliably from one computing environment to another. A Docker container image is a lightweight, standalone, executable package of software that includes everything needed to run an application: code, runtime, system tools, system libraries, and settings.

Available for both Linux- and Windows-based applications, containerized software will always run the same, regardless of the infrastructure. Containers isolate software from its environment and ensure that it works uniformly despite differences.

Now that we have learned what Docker and Docker containers are, the next section looks at the benefits of Docker containers.

What are the Benefits of Docker Containers?

Docker containers are popular because they have several advantages over Virtual Machines. A VM contains a full copy of an operating system, the application, and the necessary binaries and libraries, which can take up tens of GBs. VMs can also be slow to boot. In contrast, Docker containers take up less space (their images are often only tens of MBs in size), so a single host can handle more applications with fewer VMs and operating systems. This makes them more flexible and easier to maintain. Using Docker in the cloud is also popular and beneficial: since various applications can run on top of a single OS instance, this can be a more efficient way to run them.

Another distinct benefit of Docker containers is their ability to keep apps isolated not only from each other but also from their underlying system. This lets you control how each containerized unit uses system resources such as CPU, GPU, and network. It also makes it easier to keep data and code separate.

Docker Containers Enable Portability

A Docker container runs on any machine that supports the container’s runtime environment. You don’t have to tie applications to the host operating system, so both the application environment and the underlying operating environment can be kept clean and minimal.

You can readily move container-based apps from systems to cloud environments or from developers’ laptops to servers if the target system supports Docker and any of the third-party tools that might be used with it.

Docker Containers Enable Composability

Most business applications consist of several separate components organized into a stack—a web server, a database, an in-memory cache. Containers enable you to compose these pieces into a functional unit with easily changeable parts. A different container provides each piece so each can be maintained, updated, swapped out, and modified independently of the others.

Basically, this is the microservices model of application design. By dividing application functionality into separate, self-contained services, the model offers an alternative to slow, traditional development processes and inflexible apps. Lightweight, portable containers make it simpler to create and sustain microservices-based applications.

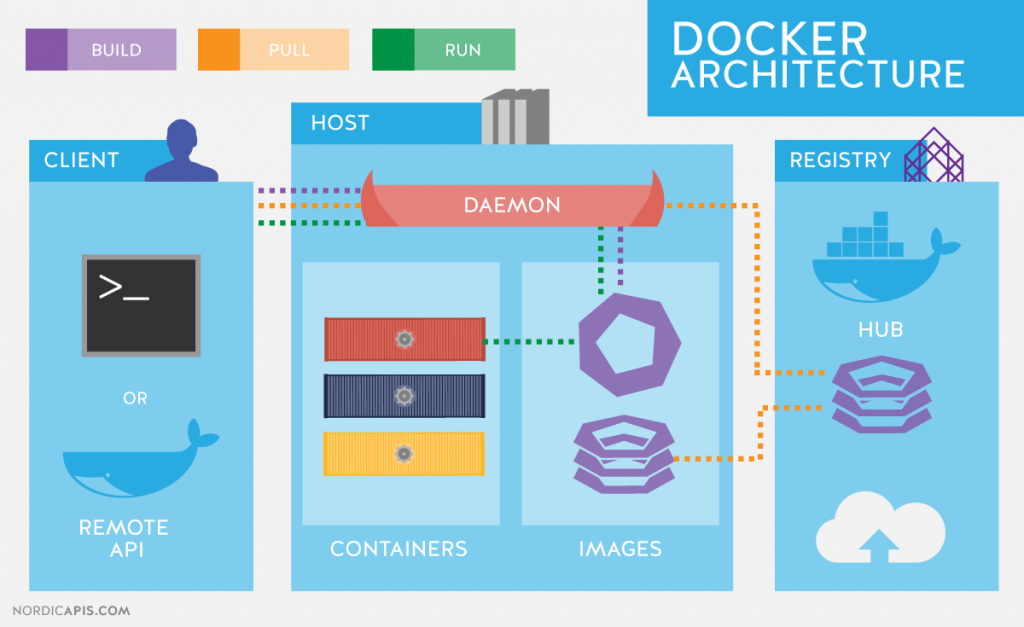

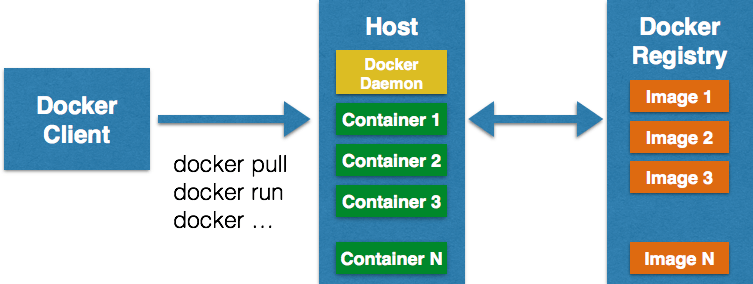

Docker architecture

Docker uses a client-server architecture. The Docker client talks to the Docker daemon, which does the heavy lifting of building, running, and distributing your Docker containers. The Docker client and daemon can run on the same system, or you can connect a Docker client to a remote Docker daemon. The Docker client and daemon communicate using a REST API, over UNIX sockets or a network interface.
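To make the client-server split concrete, here is a minimal Python sketch that acts as a very small client: it sends one REST request to the daemon over a UNIX socket. The socket path /var/run/docker.sock is the usual default on Linux but is an assumption about your setup, and the helper names (UnixHTTPConnection, docker_version) are made up for this illustration. If no daemon is reachable, the function simply returns None.

```python
import http.client
import json
import os
import socket


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that talks over a UNIX domain socket instead of TCP."""

    def __init__(self, socket_path, timeout=5):
        # "localhost" is only used to fill in the Host header.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def docker_version(socket_path="/var/run/docker.sock"):
    """Return the daemon's /version response as a dict, or None if unreachable."""
    if not os.path.exists(socket_path):
        return None
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", "/version")
        response = conn.getresponse()
        if response.status != 200:
            return None
        return json.loads(response.read())
    except (OSError, http.client.HTTPException, ValueError):
        # Permission denied, daemon not answering, or an unexpected reply.
        return None
    finally:
        conn.close()


if __name__ == "__main__":
    info = docker_version()
    if info is None:
        print("No Docker daemon reachable on the default socket")
    else:
        print("Docker Engine", info.get("Version"), "API", info.get("ApiVersion"))
```

The docker command-line client does the same kind of thing for every command you type, only with many more endpoints.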

The Docker daemon

The Docker daemon (dockerd) listens for Docker API requests and manages Docker objects such as images, containers, networks, and volumes. A daemon can also communicate with other daemons to manage Docker services.

The Docker client

The Docker client (docker) is the primary way that many Docker users interact with Docker. When you use commands such as docker run, the client sends these commands to dockerd, which carries them out. The docker command uses the Docker API. The Docker client can communicate with more than one daemon.

Docker registries

A Docker registry stores Docker images. Docker Hub is a public registry that anyone can use, and Docker is configured to look for images on Docker Hub by default. You can even run your own private registry.

When you use the docker pull or docker run commands, the required images are pulled from your configured registry. When you use the docker push command, your image is pushed to your configured registry.
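The registry that a pull goes to is decided by the image name itself. The sketch below is a simplified Python model of how a short name such as ubuntu expands to a Docker Hub reference. The function name parse_image_ref is made up for this illustration, the rules are a simplification, and digest references (name@sha256:...) are deliberately not handled.

```python
def parse_image_ref(ref):
    """Split an image reference into (registry, repository, tag)."""
    first, slash, rest = ref.partition("/")
    # The first path component names a registry only if it looks like a host.
    if slash and ("." in first or ":" in first or first == "localhost"):
        registry, remainder = first, rest
    else:
        registry, remainder = "docker.io", ref  # Docker Hub is the default

    # A tag is whatever follows the last colon, provided no slash comes after it.
    name, colon, tag = remainder.rpartition(":")
    if not colon or "/" in tag:
        name, tag = remainder, "latest"

    # Official images on Docker Hub live under the "library" namespace.
    if registry == "docker.io" and "/" not in name:
        name = "library/" + name
    return registry, name, tag


for ref in ("ubuntu", "ubuntu:22.04", "localhost:5000/myapp"):
    print(ref, "->", parse_image_ref(ref))
```

The name localhost:5000/myapp stands for an image in a private registry running on your own machine, which is a hypothetical example here.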

Docker objects

When you use Docker, you are creating and using images, containers, networks, volumes, plugins, and other objects. This section is a brief overview of some of those objects.

IMAGES

An image is a read-only template with instructions for creating a Docker container. Often, an image is based on another image, with some additional customization. For example, you may build an image which is based on the ubuntu image, but installs the Apache web server and your application, as well as the configuration details needed to make your application run.

You might create your own images or you might only use those created by others and published in a registry. To build your own image, you create a Dockerfile with a simple syntax for defining the steps needed to create the image and run it. Each instruction in a Dockerfile creates a layer in the image. When you change the Dockerfile and rebuild the image, only the layers affected by the change (the changed instruction and the ones that come after it) are rebuilt; the earlier layers are reused from the cache. This is part of what makes images so lightweight, small, and fast when compared to other virtualization technologies.
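The layer reuse described above can be sketched with a toy model in Python. This is not how Docker computes layer IDs; it only assumes that each layer's identity depends on its own instruction plus everything before it, which is why changing one instruction also invalidates the steps that follow. The Dockerfile instructions and the app/ and site/ paths are hypothetical.

```python
import hashlib


def layer_ids(instructions):
    """Return one ID per instruction; each ID depends on all earlier instructions."""
    ids = []
    parent = ""
    for instruction in instructions:
        digest = hashlib.sha256((parent + "\n" + instruction).encode()).hexdigest()[:12]
        ids.append(digest)
        parent = digest
    return ids


def steps_to_rebuild(old, new):
    """Return the instructions in `new` whose layers cannot be reused from `old`."""
    cached = set(layer_ids(old))
    return [step for step, lid in zip(new, layer_ids(new)) if lid not in cached]


before = [
    "FROM ubuntu",
    "RUN apt-get update && apt-get install -y apache2",
    "COPY app/ /var/www/html/",
    'CMD ["apachectl", "-D", "FOREGROUND"]',
]
after = list(before)
after[2] = "COPY site/ /var/www/html/"  # only the COPY instruction is edited

# The FROM and RUN layers are reused; COPY and the CMD after it are rebuilt.
print(steps_to_rebuild(before, after))
```

In this model, putting the slow, rarely changing steps (such as installing packages) near the top of the Dockerfile keeps them cached across rebuilds.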

CONTAINERS

A container is a runnable instance of an image. You can create, start, stop, move, or delete a container using the Docker API or CLI. You can connect a container to one or more networks, attach storage to it, or even create a new image based on its current state.

By default, a container is relatively well isolated from other containers and its host machine. You can control how isolated a container’s network, storage, or other underlying subsystems are from other containers or from the host machine.

A container is defined by its image as well as any configuration options you provide to it when you create or start it. When a container is removed, any changes to its state that are not stored in persistent storage disappear.

Example docker run command

The following command runs an ubuntu container, attaches interactively to your local command-line session, and runs /bin/bash.

$ docker run -i -t ubuntu /bin/bash

When you run this command, the following happens (assuming you are using the default registry configuration):

- If you do not have the ubuntu image locally, Docker pulls it from your configured registry, as though you had run docker pull ubuntu manually.

- Docker creates a new container, as though you had run a docker container create command manually.

- Docker allocates a read-write file system to the container, as its final layer. This allows a running container to create or modify files and directories in its local file system.

- Docker creates a network interface to connect the container to the default network, since you did not specify any networking options. This includes assigning an IP address to the container. By default, containers can connect to external networks using the host machine’s network connection.

- Docker starts the container and executes /bin/bash. Because the container is running interactively and attached to your terminal (due to the -i and -t flags), you can provide input using your keyboard while the output is logged to your terminal.

- When you type exit to terminate the /bin/bash command, the container stops but is not removed. You can start it again or remove it.
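The steps above can be spelled out as three separate commands. The Python sketch below is one way to do that; the function name manual_run is made up for this illustration. By default it only returns the command lines (a dry run), and it executes them only if you pass execute=True and a docker binary is on your PATH. One difference from docker run is that this sketch always calls docker pull, whereas docker run pulls only when the image is missing locally.

```python
import shutil
import subprocess


def manual_run(image="ubuntu", command=("/bin/bash",), execute=False):
    """Spell out `docker run -i -t IMAGE COMMAND` as three separate commands."""
    pull = ["docker", "pull", image]
    create = ["docker", "container", "create", "-i", "-t", image, *command]
    if not execute or shutil.which("docker") is None:
        # Dry run: the container ID is only known after `create` has run.
        start = ["docker", "container", "start", "-a", "-i", "<container-id>"]
        return [pull, create, start]

    subprocess.run(pull, check=True)
    created = subprocess.run(create, check=True, capture_output=True, text=True)
    container_id = created.stdout.strip()  # `create` prints the new container's ID
    start = ["docker", "container", "start", "-a", "-i", container_id]
    subprocess.run(start)  # returns once the shell inside the container exits
    return [pull, create, start]


for step in manual_run():
    print(" ".join(step))
```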

SERVICES

Services allow you to scale containers across multiple Docker daemons, which all work together as a swarm with multiple managers and workers. Each member of a swarm is a Docker daemon, and all the daemons communicate using the Docker API. A service allows you to define the desired state, such as the number of replicas of the service that must be available at any given time. By default, the service is load-balanced across all worker nodes. To the consumer, the Docker service appears to be a single application. Docker Engine supports swarm mode in Docker 1.12 and higher.
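The idea of a desired state can be illustrated with a few lines of Python. This is a toy model only, not how swarm mode is implemented: the function name reconcile and the task names are made up, and a real orchestrator also has to consider node health, placement, and rolling updates.

```python
def reconcile(desired_replicas, running_tasks):
    """Return the actions needed to move from the running tasks to the desired count."""
    diff = desired_replicas - len(running_tasks)
    if diff > 0:
        # Too few replicas: start the missing ones.
        return [("start", None)] * diff
    if diff < 0:
        # Too many replicas: stop the surplus, taken from the end of the list.
        return [("stop", task) for task in running_tasks[diff:]]
    return []


print(reconcile(3, ["web.1"]))
print(reconcile(1, ["web.1", "web.2", "web.3"]))
```

You state how many replicas you want, and the system keeps working out the difference between that number and what is actually running.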

The underlying technology

Docker is written in Go and takes advantage of several features of the Linux kernel to deliver its functionality.

Namespaces

Docker uses a technology called namespaces to provide the isolated workspace called the container. When you run a container, Docker creates a set of namespaces for that container.

These namespaces provide a layer of isolation. Each aspect of a container runs in a separate namespace and its access is limited to that namespace.

Docker Engine uses namespaces such as the following on Linux:

- The pid namespace: Process isolation (PID: Process ID).

- The net namespace: Managing network interfaces (NET: Networking).

- The ipc namespace: Managing access to IPC resources (IPC: InterProcess Communication).

- The mnt namespace: Managing filesystem mount points (MNT: Mount).

- The uts namespace: Isolating kernel and version identifiers. (UTS: Unix Timesharing System).
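On a Linux machine you can see which namespaces a process belongs to by reading the links under /proc. The short Python sketch below does this for the current process; the function name current_namespaces is made up for this illustration, and on systems without /proc it returns an empty dictionary. Each value has the form name:[number], and two processes share a namespace exactly when that value is identical for both.

```python
import os


def current_namespaces(pid="self"):
    """Map namespace name to its identifier for a process, or {} if unavailable."""
    ns_dir = f"/proc/{pid}/ns"
    if not os.path.isdir(ns_dir):
        return {}  # not Linux, or /proc is not mounted
    result = {}
    for name in sorted(os.listdir(ns_dir)):
        try:
            result[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            continue  # entry not readable for this process
    return result


for name, ident in current_namespaces().items():
    print(f"{name:>18}  {ident}")
```

If you run this once on the host and once inside a container, you would expect to see different identifiers for entries such as pid, net, and mnt.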

Control groups

Docker Engine on Linux also relies on another technology called control groups (cgroups). A cgroup limits an application to a specific set of resources. Control groups allow Docker Engine to share available hardware resources to containers and optionally enforce limits and constraints. For example, you can limit the memory available to a specific container.
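A memory limit set this way is visible from inside the container as a cgroup file. The Python sketch below reads it; the helper names are made up for this illustration, and the two file paths are the usual locations for cgroup v2 and cgroup v1, which is an assumption about how your system is set up. For example, a container started with docker run --memory 256m would typically report 268435456 bytes.

```python
from pathlib import Path

# Usual locations of the memory limit, as seen from inside a container.
CANDIDATES = (
    Path("/sys/fs/cgroup/memory.max"),                    # cgroup v2
    Path("/sys/fs/cgroup/memory/memory.limit_in_bytes"),  # cgroup v1
)


def parse_limit(raw):
    """Convert the file content to bytes; "max" (cgroup v2) means no limit."""
    raw = raw.strip()
    if raw == "max":
        return None
    try:
        return int(raw)
    except ValueError:
        return None


def memory_limit_bytes(paths=CANDIDATES):
    """Return the first readable memory limit in bytes, or None if none is set."""
    for path in paths:
        try:
            return parse_limit(path.read_text())
        except OSError:
            continue  # file missing or unreadable, try the next location
    return None


print("memory limit:", memory_limit_bytes())
```

On cgroup v1 an unlimited container reports a very large number rather than "max", so treat huge values as "no limit" in practice.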

Union file systems

Union file systems, or UnionFS, are file systems that operate by creating layers, making them very lightweight and fast. Docker Engine uses UnionFS to provide the building blocks for containers. Docker Engine can use multiple UnionFS variants, including AUFS, btrfs, vfs, and DeviceMapper.
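The layering idea can be modelled in a few lines of Python with collections.ChainMap, which looks a key up in a stack of dictionaries from top to bottom and sends all writes to the top one. This is only an analogy for how a union file system behaves, with made-up file names and contents; real implementations work on files and directories and also handle deletions.

```python
from collections import ChainMap

# Read-only image layers, oldest first (contents are placeholders).
base = {"/bin/bash": "bash binary", "/etc/os-release": "ubuntu"}
apache = {"/usr/sbin/apache2": "apache binary"}
app = {"/var/www/html/index.html": "v1"}


def new_container(*image_layers):
    """Stack an empty writable layer on top of the shared image layers."""
    # ChainMap searches left to right, so the writable layer comes first,
    # followed by the image layers from newest to oldest.
    return ChainMap({}, *reversed(image_layers))


c1 = new_container(base, apache, app)
c2 = new_container(base, apache, app)

c1["/var/www/html/index.html"] = "v2"  # the write lands in c1's top layer only

print(c1["/var/www/html/index.html"])  # v2
print(c2["/var/www/html/index.html"])  # v1
print(app["/var/www/html/index.html"])  # v1, the image layer is untouched
```

Both containers share the same three image layers, and each one only stores its own changes, which is why starting many containers from one image is cheap.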

Container format

Docker Engine combines the namespaces, control groups, and UnionFS into a wrapper called a container format. The default container format is libcontainer. In the future, Docker may support other container formats by integrating with technologies such as BSD Jails or Solaris Zones.

Containers are the organizational units of Docker. When we build an image and start running it, we are running in a container. The container analogy is used because of the portability of the software running inside it. We can move it (in other words, “ship” the software), and we can create, modify, manage, or destroy it, just as cargo ships can do with real containers.

In simple terms, an image is a template, and a container is a copy of that template. You can have multiple containers (copies) of the same image.

Below we have an image which perfectly represents the interaction between the different components and how Docker container technology works.

Conclusion:

Containers are often compared to virtual machines, but unlike VMs they share the host’s operating system kernel while remaining isolated from each other and from the normal computing environment of your machine, and each one still provides everything its application needs. Containers are the future of virtualization. Hope you have found all the details that you were looking for in this article.
