The Latin expression “Ab Initio” means “from the beginning” or “from first principles.” “Ab Initio” is also the name of a well-known software suite that is used in the computing and data processing fields for data manipulation, batch processing, and analysis. Large-scale data processing tasks, especially those involving Extract, Transform, and Load (ETL) operations, are well-suited to the powerful and versatile Ab Initio software.

1. Explain the role of Co>Operating System in Ab Initio?

Ans:

In Ab Initio, Co>Operating System manages parallel processing, providing a platform-independent interface for communication between processes. It orchestrates data flow and parallel execution, ensuring efficient utilization of resources. This facilitates high-performance data processing and scalability in Ab Initio applications.

2. What is a DML in Ab Initio, and how is it different from a Schema?

Ans:

A Data Manipulation Language (DML) in Ab Initio defines the structure and layout of data records. It specifies the transformation rules for data processing. Unlike a Schema, which defines the metadata for data components, a DML focuses on transformations, defining how data is manipulated during processing.

3. Describe the significance of the “sandbox” in Ab Initio Continuous Flows.

Ans:

The “sandbox” in Ab Initio Continuous Flows serves as an isolated environment for testing and debugging purposes. It allows developers to simulate data transformations and processing logic before deploying changes to the production environment. This ensures the reliability and accuracy of data processing workflows.

4. Explain the concept of “Component,” and how is it utilized in Ab Initio graphs?

Ans:

In Ab Initio, a Component represents a functional unit in a graph, encapsulating a specific data processing task. Components include Input Table, Output Table, and various transformation functions. By connecting these components in a graph, developers define the data flow and processing logic, creating a visual representation of the entire data processing workflow.

5. Explain the concept of parallel processing in Ab Initio and its benefits.

Ans:

- Ab Initio achieves parallel processing by dividing tasks into smaller sub-tasks.

- Tasks are executed concurrently, optimizing resource utilization.

- Parallel processing enhances performance, especially with large datasets.

- The Co>Operating System manages parallel execution for scalability.

- Improved efficiency is achieved through optimized data distribution.

6. Describe the purpose of the “Sort” component in Ab Initio and its impact.

Ans:

- “Sort” arranges data based on specified key fields.

- Essential for certain operations but can impact performance.

- Increased processing time and resource utilization during sorting.

- Careful consideration needed to minimize performance overhead.

- Developers explore alternative strategies when sorting is not mandatory.

7. Explain the significance of “Scan” and “Rolling Hash” algorithms in Ab Initio.

Ans:

- “Scan” algorithm used for sequential data processing.

- Efficient for operations requiring sequential access.

- “Rolling Hash” algorithm employed for hash-based partitioning.

- Optimizes data distribution across nodes in parallel processing.

- Both algorithms play a crucial role in improving data processing efficiency.

8. Discuss the advantages and challenges of using “Multi Files” in handling large datasets.

Ans:

- “Multifiles” split large datasets into smaller, manageable files.

- Facilitates parallel processing and improves scalability.

- Challenges may arise in terms of file management and coordination.

- Careful design and configuration needed for optimal performance.

- Balancing performance optimization and efficient data handling is crucial.

9. Discuss the role of “Lookups” in Ab Initio. How do they enhance data processing?

Ans:

Lookups in Ab Initio are essential for enriching data by matching records from different data sources. They enable the integration of reference data into the processing pipeline, enhancing the quality and completeness of the output. Lookups can be configured to perform various types of joins, such as inner, outer, and equality joins, providing flexibility in data integration scenarios.

10. What are the advantages of using “Departition Components” in Ab Initio Parallel Processing?

Ans:

Departition Components in Ab Initio facilitate the consolidation of data streams, allowing developers to merge parallel processing results into a single output. This is crucial for scenarios where data needs to be aggregated or consolidated after parallel execution. Departition Components optimize the final output and ensure coherence in the processed data.

11. Explain the purpose of the “Rollup” function in Ab Initio Transformations.

Ans:

The “Rollup” function in Ab Initio is employed to aggregate and summarize data at different levels of granularity. It allows developers to perform calculations such as sum, average, or count on grouped data. This function is particularly useful in data warehousing scenarios where summarization of large datasets is necessary for reporting and analysis.

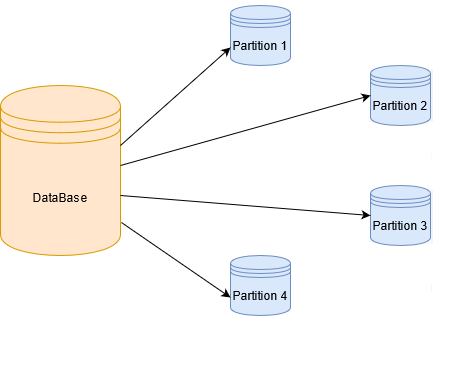

12. Explain how Ab Initio handles data partitioning strategies.

Ans:

- Ab Initio supports hash, round-robin, and key range partitioning.

- Choice depends on data characteristics and processing requirements.

- Hash partitioning ensures even distribution of data.

- Round-robin is suitable for uniform workload distribution.

- Key range partitioning optimizes processing for specific key ranges.

13. Describe the roles of “Departition” and “Concatenate” components in parallel processing.

Ans:

- “Departition” consolidates data streams after parallel processing.

- Ensures a unified output for downstream processing.

- “Concatenate” combines multiple input data streams into a single output.

- Vital for result consolidation in parallel processing scenarios.

- Enables aggregation, merging, or organization of data for downstream tasks.

14. Discuss the purpose of the “EME” (Enterprise Metadata Environment) in Ab Initio

Ans:

- EME serves as a central repository for storing metadata.

- Facilitates metadata discovery, lineage tracking, and impact analysis.

- Enhances collaboration, governance, and maintenance of workflows.

- Provides a unified view of metadata across the organization.

- Supports transparency and accountability in metadata management.

15. Describe the significance of “Phases” in Ab Initio Continuous Flows.

Ans:

Phases in Ab Initio Continuous Flows represent distinct stages in the execution of a data processing workflow. They include Initialization, Execution, and Cleanup phases. These phases ensure proper setup, execution, and resource release, enhancing the reliability and efficiency of the entire workflow. Developers can customize each phase to meet specific requirements and optimize performance.

16. What is the role of “Project and Sandbox Parameters” in Ab Initio?

Ans:

Project and Sandbox Parameters in Ab Initio provide a mechanism for configuring and managing environment-specific settings. Project Parameters define global settings for an entire project, while Sandbox Parameters allow developers to customize settings for a specific development or testing environment. This separation ensures flexibility and consistency across different stages of the development lifecycle.

17. How does Ab Initio handle data format conversions, and what challenges may arise.

Ans:

- Ab Initio supports data format conversions through various components.

- Challenges may arise with complex data structures and non-standard formats.

- Careful mapping and transformation needed for accurate conversions.

- Consideration of precision, scale, and encoding is crucial.

- Ensures reliable and precise data format conversions during processing.

18. Explain the role of “Conditional Components” in Ab Initio graphs and their impact.

Ans:

- Conditional Components introduce logic based on runtime conditions.

- Enables dynamic workflow execution in response to real-time data conditions.

- Facilitates decision-making within the processing pipeline.

- Allows developers to design adaptive workflows.

- Enhances versatility in handling diverse data processing scenarios.

19. Discuss considerations for designing efficient error handling mechanisms in Ab Initio graphs:

Ans:

- Components like “Reformat” and “Error Table” are configured for error handling.

- Developers define appropriate error thresholds for capturing errors.

- Determine error handling strategies for comprehensive logging.

- Ensure the robustness of data processing workflows.

- Timely identification and resolution of issues to maintain data quality.

20. Explain the purpose of the “Rollback” feature in Ab Initio.

Ans:

The “Rollback” feature in Ab Initio allows developers to undo changes made during the execution of a graph or data processing workflow. It is particularly useful in scenarios where errors are encountered during processing, ensuring that the system returns to its previous state. This feature enhances the robustness of data processing workflows by providing a mechanism to handle unexpected issues without compromising data integrity.

21. How does Ab Initio handle metadata management

Ans:

Ab Initio employs a metadata-driven approach for managing and governing data processing workflows. Metadata, describing the structure and characteristics of data, is stored centrally. This approach enhances transparency, traceability, and data lineage, enabling better governance and maintenance. Changes to metadata automatically propagate through the system, reducing manual effort and minimizing the risk of inconsistencies.

22. Explain the role of “Subgraphs” in Ab Initio and their contributions to graph design.

Ans:

- Subgraphs encapsulate and reuse sets of components as modular units.

- Promote a scalable and maintainable design approach.

- Standardized subgraphs can be replicated across different projects.

- Enhance code reusability and reduce redundancy.

23. What distinguishes an Ab Initio “rollup” from a “scan”?

Ans:

| Aspect | Rollup | Scan | |

| Functionality | Computes summary values by aggregating data according to predetermined key fields. | Reads and handles data in a step-by-step manner without aggregating. | |

| Usage | Frequently employed for data summarization tasks like computing averages or totals. | Used to process records in a sequential manner without aggregating them. | |

| Sorting Requirement | Requires that before using the rollup, the data be sorted according to important fields. | Handles records in the current order; pre-sorting of data is not necessary. | |

| Performance | Possibly requiring more resources because of the sorting and aggregation processes. | Faster in general when handling records one after the other without aggregation. | |

| Example Scenario | Utilizing sorted data to calculate total sales for every product category. | Examining a dataset to find and mark entries that satisfy particular requirements. |

24.Discuss the concept of “Data Profiling” in Ab Initio.

Ans:

- Data Profiling involves analyzing data sources for structure and quality.

- Identifies anomalies, inconsistencies, and potential issues.

- Ensures data quality by addressing challenges early in development.

- Informs decisions about transformations and data handling.

- Supports the overall goal of maintaining high-quality data.

25. Explain the significance of the “EME” (Enterprise Metadata Environment) in Ab Initio.

Ans:

The Enterprise Metadata Environment (EME) in Ab Initio serves as a centralized repository for storing and managing metadata related to data processing workflows. It provides a collaborative platform where multiple developers can work on projects concurrently. EME facilitates version control, ensuring that changes made by different developers are tracked, documented, and can be rolled back if necessary.

26. Explain the purpose of “Reusability” in Ab Initio graphs.

Ans:

- Reusability emphasizes creating generic components and graphs.

- Streamlines development by eliminating the need to recreate common functionalities.

- Developers leverage reusable components to expedite the development process.

- Ensures consistency and enhances maintainability.

27. How does Ab Initio handle error handling and logging in data processing workflows?

Ans:

Ab Initio offers robust error handling mechanisms through features like Rejection Handling and Error Logging. Developers can configure graphs to capture and log errors, allowing for comprehensive error analysis and troubleshooting. This ensures that data quality is maintained, and issues are promptly identified and addressed during the processing workflow.

28. Discuss the significance of “Data Sets” in Ab Initio.

Ans:

- Data Sets represent logical views of data, abstracting physical storage details.

- Provide a level of indirection for defining and manipulating data.

- Enhance flexibility in data handling.

- Simplify changes to storage configurations without affecting processing logic.

29. Explain the purpose of “Partition Components” in Ab Initio Parallel Processing.

Ans:

Partition Components in Ab Initio are used to divide large datasets into smaller partitions for parallel processing. By distributing data across multiple nodes, these components optimize resource utilization and improve overall performance. Developers can choose from various partitioning techniques based on the characteristics of the data, ensuring efficient and scalable processing in parallel.

30. What advantages does parameterization offer in graph development?

Ans:

- Ab Initio supports parameterization for configurable values in graphs.

- Enables flexibility and adaptability in graph design.

- Parameters can be adjusted without modifying the underlying code.

- Enhances maintainability and facilitates customization for different environments.

31. Discuss the concept of “Generic Graphs” in Ab Initio.

Ans:

Generic Graphs in Ab Initio allow developers to create reusable templates for common data processing tasks. These templates can be parameterized, enabling customization for specific scenarios. This promotes a modular and scalable design approach, where standardized components can be easily replicated and adapted across different projects, reducing development time and ensuring consistency.

32. Explain the use of “Inter-Component Communication” in Ab Initio graphs.

Ans:

Inter-Component Communication in Ab Initio allows components within a graph to exchange information dynamically during runtime. This enhances flexibility by enabling conditional processing and dynamic decision-making based on the output of upstream components. Developers can design more adaptive and responsive workflows, tailoring processing logic based on real-time data conditions.

33. What is the purpose of “EmePublish” in Ab Initio?

Ans:

- Emepublish is a utility facilitating metadata deployment from EME.

- Propagates updated metadata definitions to relevant environments.

- Maintains consistency across development, testing, and production stages.

- Streamlines the deployment process for metadata changes.

34. Discuss the role of “Data Transformation Language (DTL)” in Ab Initio.

Ans:

The Data Transformation Language (DTL) in Ab Initio provides a declarative language for specifying data transformations. It simplifies complex transformations by abstracting the underlying logic, making it easier for developers to express their intent without delving into low-level implementation details. DTL promotes readability, maintainability, and efficient development of sophisticated data processing logic.

35. Discuss the challenges and considerations when handling SCDs in Ab Initio.

Ans:

- Slowly changing dimensions present challenges in maintaining historical data integrity.

- Developers must choose appropriate SCD strategies (Type 1, Type 2, Type 3).

- Considerations include data versioning, key management, and impact on performance.

36. How does Ab Initio support data parallelism?

Ans:

Ab Initio leverages data parallelism to process large datasets concurrently across multiple nodes. When designing for parallel processing, considerations such as data partitioning, load balancing, and synchronization become crucial. Developers must optimize the distribution of data to ensure efficient parallel execution, avoiding bottlenecks and maximizing the benefits of parallelism.

37. Explain the concept of “EmePublish” in Ab Initio.

Ans:

Emepublish in Ab Initio is a utility that facilitates the deployment of metadata changes made in the Enterprise Metadata Environment (EME). It ensures that updated metadata definitions are propagated to relevant environments, maintaining consistency across development, testing, and production stages.

38. Explain the concept of “Continuous Flows” in Ab Initio.

Ans:

- Continuous Flows are designed for real-time, streaming data scenarios.

- Provide immediate processing as data arrives.

- Differ from traditional batch processing operating on scheduled intervals.

- Support dynamic, event-driven processing for real-time response.

39. How does Ab Initio handle metadata lineage?

Ans:

- Ab Initio captures metadata lineage by tracking data flow from source to destination.

- Crucial for data governance, providing transparency into transformations.

- Supports compliance, traceability, and accountability in data processing.

- Ensures adherence to regulatory requirements and best practices.

40. Discuss the importance of “Checkpoints” in Ab Initio graphs.

Ans:

Checkpoints in Ab Initio graphs allow developers to define recovery points within a workflow. In the event of a failure, the system can restart processing from the nearest checkpoint, minimizing the impact of interruptions. This enhances fault tolerance and ensures that data processing workflows can recover gracefully from unexpected errors, contributing to the overall reliability of the system.

41. Explain the purpose of “Continuous” and “Batch” modes in Ab Initio Continuous Flows.

Ans:

Ab Initio Continuous Flows support both Continuous and Batch processing modes. Continuous mode is suitable for real-time, streaming data scenarios, enabling immediate processing as data arrives. Batch mode, on the other hand, is designed for traditional batch processing, allowing developers to process large volumes of data at scheduled intervals.

42. How does Ab Initio handle data encryption in data processing workflows?

Ans:

Ab Initio provides robust features for data encryption and security, ensuring the confidentiality and integrity of sensitive information. Encryption algorithms can be applied at various levels, including data transport and storage.

43. Discuss the advantages and challenges of using “Reformat” components in Ab Initio graphs.

Ans:

- “Reformat” components are essential for data transformations in Ab Initio.

- Offer flexibility and expressiveness in designing transformation logic.

- Challenges may arise in complex transformation scenarios.

- Requires careful consideration of performance implications.

44. What is the significance of “Concurrent Versions System (CVS)” integration.

Ans:

- Ab Initio integrates with CVS for effective version control.

- CVS manages changes, tracks revisions, and facilitates collaboration.

- Ensures a systematic approach to versioning in development.

- Enables developers to roll back changes if necessary.

45. What is the significance of the “Sort” component in Ab Initio.

Ans:

The “Sort” component in Ab Initio is used to arrange data in a specified order based on one or more key fields. While sorting is essential for certain operations, it can impact performance due to increased processing time and resource utilization. Developers should carefully consider the necessity of sorting and explore alternative strategies to minimize performance overhead.

46. Explain the concept of “Local and Global Lookups” in Ab Initio.

Ans:

- Local Lookups reference smaller datasets for optimized performance.

- Minimizes data transfer and enhances lookup efficiency.

- Global Lookups involve larger datasets and may impact performance.

- Developers must choose based on reference data characteristics.

47. Discuss considerations and best practices for optimizing Ab Initio graphs for parallel execution

Ans:

- Optimize data partitioning for even distribution and efficient parallel processing.

- Consider load balancing to ensure equitable resource utilization.

- Minimize data movement between nodes for enhanced performance.

- Utilize parallel components effectively to maximize parallelism benefits.

48. How does Ab Initio handle metadata lineage?

Ans:

Ab Initio captures metadata lineage by tracking the flow of data from source to destination in a processing workflow. This lineage information is crucial for data governance, providing transparency into how data is transformed and utilized. By understanding the lineage, organizations can ensure compliance, traceability, and accountability in data processing workflows, aligning with regulatory requirements and best practices for data management.

49. Discuss the advantages and challenges of using “Reformat” components.

Ans:

The “Reformat” component in Ab Initio is essential for data transformations, offering flexibility and expressiveness. However, challenges may arise in complex transformation scenarios, requiring careful consideration of performance implications and efficient use of functions.

50. Explain the role of “Departition” component in Ab Initio parallel processing.

Ans:

- “Departition” consolidates data streams after parallel processing.

- Ensures a unified output for downstream processing.

- Essential for transforming parallelized data back into a cohesive format.

- Supports aggregation, merging, or further processing of parallelized results.

51. Discuss the use of “Lookup File” and “Dedup Sorted” components in Ab Initio.

Ans:

The “Lookup File” component in Ab Initio is employed for referencing external datasets, enhancing data enrichment. Meanwhile, the “Dedup Sorted” component eliminates duplicate records from sorted data. By incorporating these components into data processing workflows, developers ensure data accuracy, completeness, and consistency, contributing to overall data quality assurance.

52. Explain the role of “Scan” and “Rolling Hash” algorithms in Ab Initio.

Ans:

The “Scan” algorithm in Ab Initio is used for sequential data processing, while the “Rolling Hash” algorithm is employed for hash-based partitioning in parallel processing. These algorithms play a crucial role in optimizing data processing efficiency. The Scan algorithm ensures sequential access, while the Rolling Hash algorithm enhances data distribution across nodes, minimizing bottlenecks and improving parallel execution.

53. Explain the purpose of “Continuous>Partition” and its significance in real-time processing.

Ans:

- “Continuous>Partition” facilitates parallel processing in real-time scenarios.

- Essential for handling continuous data streams and distributing workload.

- Optimizes resource utilization in dynamically changing data environments.

- Supports immediate processing of incoming data for real-time responsiveness.

- Ensures scalability and efficiency in handling streaming data.

54. Discuss the challenges and strategies for handling complex file formats in Ab Initio

Ans:

- Complex file formats, like Avro or Parquet, pose challenges in processing.

- Developers must implement custom parsing logic for non-standard formats.

- Consideration of data structure and encoding is crucial for accurate processing.

- Integration of external libraries may be necessary for handling specific formats.

- Testing and validation procedures should be comprehensive to ensure compatibility.

55. What should be taken into account when choosing a partitioning approach?

Ans:

Ab Initio provides various data partitioning strategies, including hash, round-robin, and key range partitioning. The choice depends on data characteristics and processing requirements. Hash partitioning ensures even distribution, round-robin is suitable for uniform workload, and key range partitioning optimizes processing for specific key ranges. Developers should carefully analyze data distribution patterns and workload to select the most appropriate partitioning strategy for optimal performance.

56. Explain the role of “Trash” component in Ab Initio and its significance in error handling.

Ans:

- “Trash” component is used for discarding unwanted or erroneous data.

- Redirects data to a designated discard file or stream.

- Facilitates effective error handling by isolating problematic records.

- Ensures that processing continues smoothly despite encountering errors.

- Developers configure “Trash” components to maintain data integrity in workflows.

57. Describe the considerations for implementing version control in Ab Initio projects.

Ans:

- Integration with version control systems like CVS or Git is essential.

- Developers must commit changes with meaningful comments for documentation.

- Branching and merging strategies should be well-defined for collaborative development.

- Regularly updating local copies with the latest changes from the repository is crucial.

- Version control ensures a systematic approach to managing project revisions.

58. Explain the purpose of “Redefine” in Ab Initio and its role.

Ans:

“Redefine” is used to redefine the structure of a data record. It allows developers to change the data type or length of fields. Facilitates flexible data transformations by modifying record structures. Crucial for adapting data to meet changing business requirements. “Redefine” components are strategically placed within graphs for targeted transformations.

59. Explain the purpose of the “Gather” component in Ab Initio.

Ans:

- “Gather” component consolidates data streams from parallel processes.

- Merges results to form a cohesive output for downstream processing.

- Ensures that data processed in parallel is appropriately combined.

- Facilitates aggregation or further transformations on the consolidated data.

- Essential for maintaining data integrity in parallelized workflows.

60. Discuss the advantages and challenges of using “Parallel Run”.

Ans:

- “Parallel Run” allows running multiple instances of a graph simultaneously.

- Facilitates parallel execution for increased throughput.

- Challenges may include managing resource contention in parallel runs.

- Developers must carefully design and configure parallel run instances.

- Enhances performance when appropriately utilized in scenarios with parallelizable tasks.

61. Discuss the considerations for handling complex transformations in Ab Initio graphs.

Ans:

Complex transformations may involve multiple components and logic. Developers must prioritize accuracy while ensuring optimal performance. Extensive testing is crucial to validate the correctness of complex transformations. Documentation of transformation logic and business rules is essential for maintainability. Collaboration between development and business stakeholders is key for successful implementation.

62. Explain the role of “Multi Files” in Ab Initio.

Ans:

“Multifiles” divide large datasets into smaller, manageable files. Facilitates parallel processing for improved scalability. Challenges may arise in terms of file management and coordination. Requires careful design and configuration for optimal performance. Balancing performance optimization and efficient data handling is crucial.

63. Discuss the considerations for implementing data encryption in Ab Initio

Ans:

Encryption involves securing sensitive data during transmission or storage. Ab Initio supports encryption through components like “Encrypt” or external libraries. Developers must choose appropriate encryption algorithms based on security requirements. Key management is crucial for secure encryption and decryption processes. Rigorous testing and validation are necessary to ensure the effectiveness of encryption mechanisms.

64. Explain the role of “Join” components in Ab Initio.

Ans:

- “Join” components are used to combine data from multiple sources based on specified conditions.

- Optimizing join operations involves selecting appropriate join strategies.

- Hash joins are efficient for large datasets, while merge joins are suitable for sorted data.

- Careful consideration of data distribution and key fields is crucial for optimal performance.

- Developers must analyze joint strategies to minimize data movement and improve efficiency.

65. Discuss the significance of “Look-Ahead Partitioning” in Ab Initio parallel processing.

Ans:

- “Look-Ahead Partitioning” optimizes data distribution by anticipating future processing needs.

- The system evaluates the characteristics of data partitions and adjusts for efficient execution.

- Ensures better load balancing and minimizes the need for data repartitioning.

- Improves overall performance by anticipating and accommodating future processing demands.

- Careful consideration of data patterns is crucial for effective implementation.

66. Describe the purpose of “Repartition” in Ab Initio parallel processing.

Ans:

“Repartition” redistributes data across nodes in parallel processing. Essential for achieving an even workload distribution and optimal resource utilization. Improper use may lead to skewed data distribution, impacting performance. Developers must choose appropriate repartitioning strategies based on data characteristics. Proper configuration of “Repartition” ensures efficient parallel execution in Ab Initio graphs.

67. Explain the purpose of “Checkpoints” in Ab Initio and their role.

Ans:

“Checkpoints” mark specific points in a graph for resumption in case of failures. Ensures data processing resumes from the last successfully processed checkpoint. Crucial for maintaining reliability and consistency in long-running processes. Developers strategically place checkpoints to minimize data reprocessing. Enhances fault tolerance and robustness in Ab Initio data processing workflows.

68. Discuss the purpose of “Co>Operating System” in Ab Initio and its role in parallel processing.

Ans:

- “Co>Operating System” manages and controls parallel execution in Ab Initio.

- Essential for optimizing resource utilization and orchestrating parallel workflows.

- Coordinates communication between various components and nodes in parallel processing.

- Ensures efficient data distribution and collection during parallel execution.

- “Co>Operating System” is integral to achieving scalability and performance in Ab Initio.

69. Explain the purpose of “Breath-First Search” and “Depth-First Search” algorithms.

Ans:

- “Breath-First Search” and “Depth-First Search” determine the execution order of components.

- “Breath-First Search” processes components level by level, ensuring a systematic order.

- “Depth-First Search” explores components in a hierarchical depth-first manner.

- Developers choose the algorithm based on workflow requirements and dependencies.

- Proper selection of search algorithms ensures effective and efficient graph execution.

70. Discuss the considerations for handling large-scale data migration projects in Ab Initio:

Ans:

Large-scale data migration involves moving vast amounts of data between systems. Thorough analysis of source and target systems is essential for mapping data accurately. Incremental migration strategies can minimize downtime and optimize resource usage. Data validation procedures must be comprehensive to ensure accuracy post-migration. Collaboration between development, operations, and business teams is crucial for success.

71. Describe the considerations for implementing data compression in Ab Initio.

Ans:

Data compression reduces the size of data for efficient storage and transmission. Ab Initio supports compression through components like “Compress” or external libraries. Developers must choose compression algorithms based on performance and space requirements. Compression ratios and decompression efficiency are crucial factors for consideration. Rigorous testing and validation are necessary to ensure the effectiveness of compression mechanisms.

72. Explain the role of “Partition By Key” and “Sort” components.

Ans:

- “Partition By Key” optimizes parallel processing by grouping data based on key fields.

- Ensures even distribution of data across nodes for efficient workload balancing.

- “Sort” component arranges data based on specified key fields, impacting performance.

- Developers must carefully consider the necessity of sorting and its implications.

- Balancing the benefits of “Partition By Key” and potential sorting overhead is crucial for optimization.

73. Discuss the challenges for handling data quality assurance in Ab Initio projects

Ans:

- Ensuring data accuracy, completeness, and consistency is a complex task.

- Components like “Validate Records” and “Check Table” are configured for quality checks.

- Developers define appropriate validation rules and error thresholds.

- Comprehensive logging and reporting mechanisms are crucial for error handling.

- Timely identification and resolution of data quality issues are essential for maintaining robust workflows.

74. What does Ab Initio mean to you?

Ans:

The Latin phrase “Ab Initio” means “from the beginning” or “from first principles.” Within the computing domain, it designates a software suite renowned for its proficiency in data processing, data analysis, and ETL (Extract, Transform, Load) procedures. With the help of Ab Initio’s graphical development environment, users can design and implement data processing workflows and produce scalable, effective solutions for managing massive amounts of data.

75. What Is It Ab Initio Software?

Ans:

A full range of data processing, ETL, and integration tools is referred to as Ab Initio Software. With the help of its graphical development environment, users can visually design and implement data workflows. Ab Initio is renowned for its capacity for parallel processing, scalability, and adaptability when handling challenging data integration projects. It is extensively utilized in fields that work with complex and sizable datasets to carry out operations like loading, cleansing, and data transformation.

76. Which sectors make use of Ab Initio the most?

Ans:

Ab Initio is widely utilized in many different industries that deal with large volumes of data and need reliable ETL and data integration solutions. Among the sectors that use Ab Initio frequently are:

- Finance

- Telecommunications

- Healthcare

- Retail

- Manufacturing

77. What are the applications made by Ab Initio Software used for?

Ans:

In the area of data processing and integration, Ab Initio Software applications have multiple uses.

- ETL Processes: A lot of people use Ab Initio to design and run ETL processes.

- Data Quality Assurance: By offering instruments for data enrichment, validation, and cleansing, Ab Initio applications support data quality assurance.

- Parallel Processing: By dividing up processing duties among several servers, Ab Initio’s parallel processing capabilities allow for the effective handling of massive datasets.

- Metadata Management: Users can capture and manage metadata related to data processing workflows with the software’s powerful metadata management features.

78. What background information do you have on Ab Initio Software?

Ans:

1995 saw the founding of Ab Initio Software Corporation, a software firm with a focus on high-performance data integration and management solutions by Sheryl Handler. Extract, Transform, and Load (ETL) processes and data warehousing are two common uses for the company’s flagship product, Ab Initio Co>Operating System.

79. Which architectural components are most important in Abinitio?

Ans:

- Creating Ab Initio graphs is primarily done with the Graphical Development Environment (GDE).

- Operating System: Ab Initio runtime environment for graph execution.

- Ab Initio application-related metadata management and storage are handled by Enterprise Meta>Environment (EME).

- With the aid of a data profiler, data sources can be examined and characterized.

- Perform>It: A tool for scheduling and tracking Ab Initio processes.

80. What do you make of Abinitio’s dependency analysis?

Ans:

Dependency analysis, as used in Ab Initio, is the process of understanding and managing the relationships between various components in a data processing task. It helps identify dependencies between data sets, processes, and transformations to ensure correct execution sequence and data flow.

81. In Abinitio, what does data encoding mean?

Ans:

In Ab Initio, “data encoding” refers to the act of utilizing a particular character set or encoding scheme to represent and store data. It guarantees accurate data processing and interpretation, particularly when working with internationalized and multilingual data.

82. Which file extension types are available in Abinitio?

Ans:

- ..mp: The graph definition is contained in an Ab Initio graph file

- .dml: A file in Data Manipulation Language that describes the organization of data records.

- .out is the output file produced by Ab Initio processes;

- .dbc is a database connection file that contains information to connect to a database.

83. What data is required to establish a database connection provided by a.dbc file extension?

Ans:

In Ab Initio, a.dbc file holds the metadata required to create a connection to a database. It contains information about the kind of database, the connection URL, the username, the password, and other configuration parameters needed to connect to the designated database system.

84. Which kinds of parallelism are present in Abinitio?

Ans:

- Component parallelism is the practice of running several instances of a component concurrently on the same set of data.

- Data parallelism is the process of processing multiple components or processes in parallel to handle different sets of data.

- Pipeline Parallelism: Permits the pipeline-style concurrent execution of several components.

85. Which kinds of parallelism are present in Abinitio?

Ans:

- Dedup Component: Based on designated key fields, this component eliminates duplicate records from a dataset.

- Replicate Component: Enables parallel processing or data replication by producing multiple identical copies of a dataset.

86. What does the term “partition” mean to you? Which kinds of partition components are there in Abinitio?

Ans:

Definition: In Ab Initio, a partition is the division of data into partitions or subsets for processing in parallel.

Partition Component Types:

- Round Robin Partitioning: This method divides data equally among designated partitions.

- Hash Partition: Distributes data according to a key using a hash function.

- Sends a copy of the complete dataset to every processing component through broadcast partitioning.

87. What do you mean by errors related to overflow?

Ans:

Definition: When data fills a data structure or field that is larger than intended, an overflow error occurs.

- Cause: Data truncation or loss due to insufficient space allotted for a data field or buffer.

- Preventive measures: To prevent data loss, appropriately size data structures and manage overflow situations.

88. Which air commands are available in Abintio?

Ans:

- air runs a graph in Ab Initio.

- breath: Offers details on the Ab Initio surroundings.

- oversees the environment of the Ab Initio Co>Operating System.

- gde: Opens the Graphical Development Environment for creating Ab Initio graphs.

- m_dump: Shows metadata information about an Ab Initio graph.

- Ab Initio command line interpreter for running scripts and commands is called msh.

89. What does Abinitio’s m_dump syntax serve as?

Ans:

Syntax

- m_dump -t <`graph_type`> -x <`graph_name`>

Use: In Ab Initio, the `m_dump` command is used to show metadata for a given graph. It offers specifics like input/output ports, transformation logic, and components used.

90. What does Abinitio’s Sort Component mean to you?

Ans:

Functionality: Ab Initio’s Sort component is used to sort records according to one or more designated key fields.

Important characteristics:

- Allows for both descending and ascending sorting.

- Supports various data types for key fields.

- Makes it easier to get rid of duplicate records when sorting.