- Introduction to Generative AI

- Fundamentals of AI and Machine Learning

- Understanding Generative Models

- Deep Dive into Transformers (GPT, BERT)

- Working with Large Language Models (LLMs)

- Tools and Frameworks

- Building Your First Generative AI Project

- Ethics, Risks, and Future of Generative AI

- Conclusion

Introduction to Generative AI

Generative AI is a rapidly growing branch of artificial intelligence that creates new content such as text, images, audio, and code by learning patterns from large datasets. Unlike traditional AI systems, which are designed mainly for analysis or prediction, generative models produce original outputs that can resemble human-created work. Transformer-based models have driven much of this progress: GPT generates fluent natural language, while BERT is built for language understanding. Generative AI is now applied in chatbots, virtual assistants, content generation, design, and software development. As adoption grows across industries, Gen AI Training is becoming more important, equipping individuals with the knowledge and practical skills to build, use, and manage AI-driven solutions.

Fundamentals of AI and Machine Learning

AI and machine learning fundamentals form the foundation for understanding how intelligent systems are designed and developed. Artificial Intelligence refers to the ability of machines to perform tasks that typically require human intelligence, such as reasoning, learning, and decision-making. Machine Learning, a subset of AI, enables systems to learn from data and improve their performance without being explicitly programmed for each task. It includes supervised learning, where models are trained on labeled data, and unsupervised learning, which finds hidden patterns in unlabeled data. Deep learning, a subset of machine learning built on multi-layer neural networks, extends this to large and complex datasets. Frameworks such as TensorFlow and PyTorch are widely used to build and train these models. Understanding these core concepts is essential for anyone pursuing Gen AI Training and looking to develop AI-driven applications.
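
To make the idea of supervised learning concrete, the following is a minimal sketch in plain Python rather than TensorFlow or PyTorch. It fits a straight line to a handful of labeled points using gradient descent. The data, the learning rate, and the function name `fit_line` are illustrative assumptions, not part of any framework.

```python
# Toy supervised learning: fit y = w*x + b to labeled examples with gradient descent.
# The data, learning rate, and epoch count below are illustrative assumptions.

def fit_line(xs, ys, lr=0.05, epochs=2000):
    """Return (w, b) that approximately minimize mean squared error on the labeled pairs."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of MSE = (1/n) * sum((w*x + b - y)^2) with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]   # labels generated by y = 2x + 1
w, b = fit_line(xs, ys)
print(round(w, 2), round(b, 2))
```

The model is never told the rule behind the labels; it recovers a slope near 2 and an intercept near 1 from the examples alone, which is the sense in which it learns "without being explicitly programmed".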

Understanding Generative Models

1. What are Generative Models: Generative models are a class of machine learning models designed to create new data samples that resemble the training data. They learn the underlying patterns and distributions, enabling them to generate realistic outputs such as text, images, or audio.

2. How Generative Models Work: These models analyze large datasets to understand patterns and relationships within the data. By learning probability distributions, they can generate new content that follows similar structures, making the outputs appear natural and meaningful.

3. Types of Generative Models: There are several types of generative models, including Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), and transformer-based models like GPT, each suited for different tasks and data types.

4. Training Process of Generative Models: Training involves feeding large datasets into the model so it can learn the patterns and features of the data. The process requires significant computational power and optimization techniques to ensure outputs are accurate and realistic.

5. Applications of Generative Models: Generative models are widely used in content creation, image synthesis, chatbots, music generation, and more. They help automate creative processes and improve productivity across industries such as media, healthcare, and technology.

6. Challenges in Generative Models: Despite their capabilities, generative models face challenges such as bias in data, high computational requirements, and difficulty in controlling outputs. Addressing these issues is important for building reliable and ethical AI systems.
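
The idea of learning a probability distribution and then sampling from it can be shown with a very small model. The sketch below is a word-level bigram (Markov chain) model in plain Python. It is not a GAN, VAE, or transformer; it is a deliberately simple stand-in, and the corpus and the function names `train_bigram` and `generate` are hypothetical.

```python
import random
from collections import defaultdict, Counter

def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        counts[current][nxt] += 1
    return counts

def generate(counts, start, length=8, seed=0):
    """Sample a word sequence by repeatedly drawing the next word from the learned counts."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        followers = counts.get(out[-1])
        if not followers:          # no observed continuation: stop early
            break
        choices, weights = zip(*followers.items())
        out.append(rng.choices(choices, weights=weights, k=1)[0])
    return " ".join(out)

corpus = "the cat sat on the mat the dog sat on the rug"
model = train_bigram(corpus)
print(generate(model, "the"))
```

Every adjacent word pair in the output was seen during training, yet the sentence as a whole may be new. Larger generative models follow the same pattern of estimating a distribution and sampling from it, with far richer representations.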

Deep Dive into Transformers (GPT, BERT)

Transformers are a deep learning architecture, a type of artificial neural network, that has reshaped natural language processing by enabling machines to understand and generate human language far more effectively than earlier approaches. Unlike recurrent neural networks, which read a sequence one token at a time, transformers process the entire sequence in parallel, which makes training faster and more efficient. Their core innovation is the attention mechanism, which lets the model weigh every word in a sentence against every other word, regardless of position. This helps capture context even in long and complex sentences. GPT models generate coherent text by predicting one token after another, while BERT reads context in both directions and is optimized for understanding tasks. These models power chatbots, assistants, translation, content creation, and search systems, forming a foundation of modern Generative AI.
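
The attention mechanism described above can be sketched in a few lines of plain Python. This is scaled dot-product attention on toy vectors; it omits the learned query, key, and value projections, multiple heads, and masking that real transformer layers use, and the input numbers are made up for illustration.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # The output is the weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs

# Three toy token vectors; self-attention uses the same vectors as Q, K, and V.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in attention(x, x, x):
    print([round(v, 3) for v in row])
```

Each output row mixes information from all three input positions at once, which is why position in the sequence does not limit what a token can attend to.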

Working with Large Language Models (LLMs)

1. Introduction to LLMs: Large Language Models (LLMs) are advanced AI systems designed to understand and generate human-like text. They are trained on massive datasets to learn grammar, context, and meaning, enabling them to perform tasks like writing, summarizing, and answering questions effectively.

2. Training Process of LLMs: LLMs are trained using deep learning on large volumes of text data. The model learns patterns, relationships, and context between words through self-supervised learning. This requires high computational power and optimization techniques for better accuracy.

3. Tokenization and Embeddings: Tokenization breaks text into smaller units called tokens, which the model can process. Embeddings convert these tokens into numerical vectors that represent meaning, allowing LLMs to understand relationships between words in context.

4. Prompt Engineering: Prompt engineering is the process of designing effective inputs to guide LLMs in generating accurate and useful outputs. Well-structured prompts improve response quality and help control the behavior of models like GPT.

5. Fine-Tuning and Customization: Fine-tuning involves training a pre-trained LLM on specific domain data to improve performance for particular tasks. This helps customize models for industries like healthcare, education, or customer support.

6. Applications and Challenges of LLMs: Large Language Models are used in chatbots, content creation, translation, and coding assistance. However, they also face challenges like bias, hallucinations, and high computational costs, which must be carefully managed for reliable use.
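
Tokenization and embeddings can be illustrated with a small sketch. The tokenizer below simply lowercases and splits on whitespace, whereas production LLMs typically use subword tokenizers. The embedding vectors are hand-picked two-dimensional values chosen for illustration, not learned weights, and the helper names are hypothetical.

```python
import math

def tokenize(text):
    """Lowercase and split on whitespace; a simplification of real subword tokenizers."""
    return text.lower().split()

def build_vocab(tokens):
    """Assign each distinct token an integer id in order of first appearance."""
    vocab = {}
    for tok in tokens:
        vocab.setdefault(tok, len(vocab))
    return vocab

def cosine(a, b):
    """Cosine similarity: close to 1.0 when two vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

tokens = tokenize("The king and the queen")
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]

# Hand-picked 2-d vectors standing in for learned embeddings (hypothetical values).
embeddings = {
    "the": [0.1, 0.0],
    "king": [0.9, 0.8],
    "and": [0.0, 0.1],
    "queen": [0.8, 0.9],
}
print(ids)
print(round(cosine(embeddings["king"], embeddings["queen"]), 3))
```

The model only ever sees the integer ids and their vectors. Words with related meanings end up with vectors that point in similar directions, which is what the cosine similarity measures.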

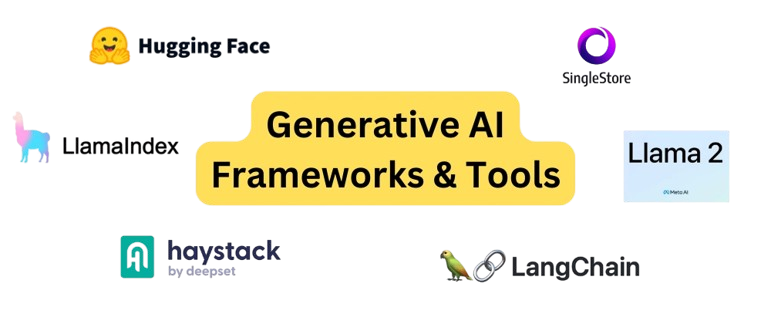

Tools and Frameworks

1. TensorFlow

2. PyTorch

3. Hugging Face Transformers

4. OpenAI API

5. LangChain

6. Stable Diffusion

Building Your First Generative AI Project

Building your first Generative AI project is an exciting step that helps you understand how AI models are created, trained, and used in real-world applications. The process begins with selecting a problem statement such as text generation, chatbot development, or image creation. Next, you set up the development environment using tools like TensorFlow or PyTorch, along with the necessary libraries and APIs. You then collect and preprocess data to ensure high-quality inputs for effective learning. After that, you choose a suitable model architecture, such as a transformer-based system like GPT, and begin training. The model is evaluated and fine-tuned to improve performance before being deployed as a web app, chatbot, or API, enabling real-world interaction and practical learning.
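
The preprocess, train, and evaluate stages of this workflow can be sketched end to end on a toy scale. In the example below a unigram word counter stands in for a real transformer, and perplexity on held-out lines stands in for a full evaluation suite. The data and the function names are hypothetical, and deployment is not shown.

```python
import math
from collections import Counter

def preprocess(raw_lines):
    """Clean the data: strip whitespace, lowercase, and drop empty lines."""
    return [line.strip().lower() for line in raw_lines if line.strip()]

def train(lines):
    """'Train' a unigram word model by counting word frequencies."""
    return Counter(word for line in lines for word in line.split())

def perplexity(counts, lines):
    """Evaluate on the given lines with add-one smoothing; lower is better."""
    vocab_size = len(counts) + 1          # +1 slot shared by all unseen words
    total = sum(counts.values())
    log_prob, n = 0.0, 0
    for line in lines:
        for word in line.split():
            p = (counts[word] + 1) / (total + vocab_size)
            log_prob += math.log(p)
            n += 1
    return math.exp(-log_prob / n)

raw = ["The cat sat ", "", "the dog sat", "The cat ran", "a dog ran"]
data = preprocess(raw)
train_set, test_set = data[:3], data[3:]   # simple hold-out split
model = train(train_set)
print(round(perplexity(model, test_set), 2))
```

Keeping the evaluation data separate from the training data is the important design choice here: a score measured on text the model has already seen would overstate how well it handles new input.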

Ethics, Risks, and Future of Generative AI

- Fairness, transparency, and accountability in AI systems: Ethical Generative AI focuses on reducing bias in data and outputs to ensure fair results for all users. Transparency helps people understand how AI decisions are made, while accountability ensures developers take responsibility. This builds trust and supports safe, responsible AI usage in real-world applications.

- Misinformation, deepfakes, privacy violations, and misuse: Generative AI models like GPT can create realistic fake content, which may spread misinformation, and image and video models can produce deepfakes. This can harm trust and security online. Privacy issues may occur if sensitive data is misused. Strong safeguards are needed to prevent unethical or harmful use of AI systems.

- High computational requirements and environmental concerns: Training large AI models requires powerful hardware, large datasets, and a great deal of energy. This increases costs and raises environmental concerns due to carbon emissions. Smaller organizations may struggle to access such resources, making AI development less accessible without optimization techniques and efficient computing methods.

- Future of Generative AI and its impact on industries: The future of Generative AI is expected to focus on safer, faster, and more efficient systems aligned with human values. Improvements in accuracy and reliability should benefit sectors like healthcare, education, and business. The technology is likely to drive automation, creativity, and innovation while becoming more widely accessible.

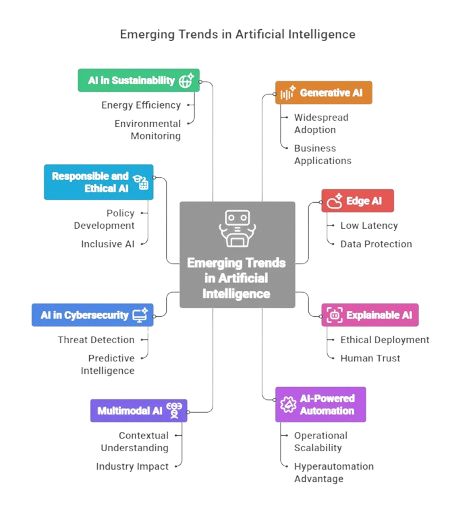

Prompt Engineering and Optimization Techniques

Prompt engineering and optimization are essential skills in Generative AI. They focus on designing effective inputs to get accurate, relevant, and high-quality outputs from models like GPT. Prompt engineering involves carefully structuring questions, instructions, or examples so the model clearly understands the task. A well-designed prompt can significantly improve response quality, reduce ambiguity, and guide the model toward the desired result, whether that is summarization, content generation, or problem-solving. Optimization refines prompts through iteration: testing different formats and adjusting context length, tone, or constraints. Techniques like few-shot prompting, chain-of-thought prompting, and role-based prompts help improve reasoning and accuracy in outputs. These methods are widely used in real-world applications such as chatbots, virtual assistants, and content creation tools. As Generative AI continues to evolve, mastering prompt engineering and optimization has become a key part of Gen AI Training, enabling users to control model behavior efficiently across industry use cases.
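
As an illustration of how role-based, few-shot, and chain-of-thought prompts can be assembled, here is a minimal sketch of a prompt builder in plain Python. It only constructs the text that would be sent to a model; it does not call any API. The function name `build_prompt`, the "Input:"/"Output:" layout, and the example reviews are assumptions made for this example.

```python
def build_prompt(role, task, examples, query, think_step_by_step=False):
    """Assemble a role-based, few-shot prompt as plain text."""
    parts = [f"You are {role}.", f"Task: {task}", ""]
    for question, answer in examples:      # few-shot demonstrations
        parts.append(f"Input: {question}")
        parts.append(f"Output: {answer}")
        parts.append("")
    parts.append(f"Input: {query}")
    if think_step_by_step:                 # simple chain-of-thought cue
        parts.append("Explain your reasoning step by step, then give the answer.")
    parts.append("Output:")
    return "\n".join(parts)

prompt = build_prompt(
    role="a concise sentiment classifier",
    task="Label each review as positive or negative.",
    examples=[("Great battery life", "positive"), ("Broke after a day", "negative")],
    query="The screen is stunning",
)
print(prompt)
```

Keeping the prompt in a function like this makes iteration easier: the role, the number of examples, and the reasoning cue can each be varied independently and the resulting outputs compared.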

Conclusion

Generative AI represents a major shift in how machines create and interact with content, enabling systems to generate text, images, audio, and code with remarkable accuracy and creativity. Through concepts like neural networks, transformers, and large language models such as GPT, this technology is transforming industries including education, healthcare, marketing, and software development. However, responsible use is essential to address challenges such as bias, misinformation, and computational costs. As the field continues to evolve, continuous learning and hands-on practice are crucial. Enrolling in Gen AI Training helps learners build strong foundational and practical skills to effectively work with modern generative AI tools and contribute to future innovations in this rapidly growing domain.
