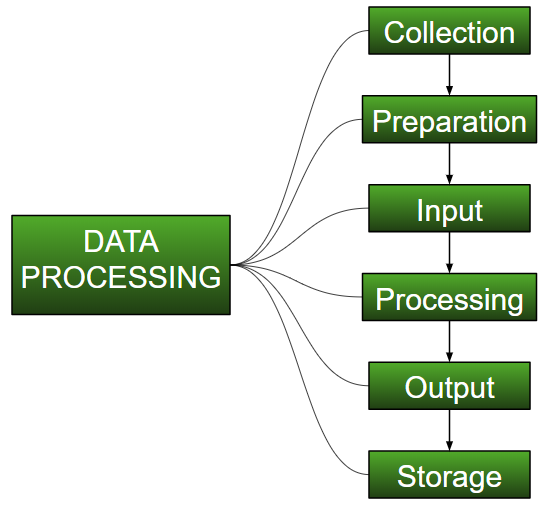

Data processing occurs when data is collected and translated into usable information. It is usually performed by a data scientist or a team of data scientists, and it must be done correctly so that it does not negatively affect the end product, or data output.

- Introduction Of Data Processing

- Methods Of Data Processing

- Examples Of Data Processing

- Tools For Data Processing

- Features Of Data Processing

- How Does It Work?

- Why Data Processing?

- Trends In Data Processing

- Data Processing In Research Area

- Advantages Of Data Processing

- Disadvantages Of Data Processing

- Application Of Data Processing

- Conclusion Of Data Processing

INTRODUCTION OF DATA PROCESSING

Data in its raw form isn't useful to any organization. Data processing is the method of collecting raw data and translating it into usable information. It is usually carried out step by step by a team of data scientists and data engineers in an organization. The raw data is collected, filtered, sorted, processed, analyzed, stored, and then presented in a readable format. Data processing is essential for organizations to create better business strategies and increase their competitive edge.
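The sequence described above (collect, filter, sort, process, analyze, store, present) can be sketched in a few lines of Python. This is only an illustrative sketch: the sample records, the function names, and the "average per region" analysis are all assumptions made for the example, not part of any particular tool.

```python
from statistics import mean

# Hypothetical raw records: (region, sale amount as text). Values are made up.
RAW_RECORDS = [
    ("north", "120.50"),
    ("south", "n/a"),
    ("north", "80.00"),
    ("east", ""),
    ("south", "200.25"),
]


def collect():
    """Collection: return the raw records as gathered."""
    return list(RAW_RECORDS)


def is_valid(record):
    """Filtering rule: keep records whose amount parses as a number."""
    try:
        float(record[1])
        return True
    except ValueError:
        return False


def process(records):
    """Processing: convert text amounts to floats."""
    return [(region, float(amount)) for region, amount in records]


def analyze(records):
    """Analysis: average amount per region."""
    by_region = {}
    for region, amount in records:
        by_region.setdefault(region, []).append(amount)
    return {region: mean(values) for region, values in by_region.items()}


def present(summary):
    """Presentation: one readable line per region."""
    return [f"{region}: {avg:.2f}" for region, avg in sorted(summary.items())]


def run_pipeline():
    collected = collect()
    filtered = [r for r in collected if is_valid(r)]
    ordered = sorted(filtered)      # sorting
    processed = process(ordered)
    summary = analyze(processed)
    stored = dict(summary)          # storage stand-in: an in-memory copy
    return present(stored)


if __name__ == "__main__":
    for line in run_pipeline():
        print(line)
```

In a real system each stage would usually be backed by a database, a file store, or a reporting tool; the in-memory copy here only marks where storage would happen.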

METHODS OF DATA PROCESSING

There are three main data processing methods:

- Manual Data Processing: In this method, data is processed manually. The entire process of data collection, filtering, sorting, calculation and other logical operations is carried out with human intervention, without using any electronic device or automation software. It is a low-cost method that requires little to no tooling, but it produces many errors, incurs high labor costs and takes a lot of time.

- Mechanical Data Processing: Data is processed mechanically through the use of devices and machines. These can include simple devices such as calculators, typewriters and printing presses. Simple data processing operations can be carried out with this method. It produces far fewer errors than manual data processing, but the growth of data has made this method more complex and difficult.

- Electronic Data Processing: Data is processed with modern technology using data processing software and programs. A set of instructions is given to the software to process the data and produce output. This method is the most expensive but offers the fastest processing speeds with the highest reliability and accuracy of output.

EXAMPLES OF DATA PROCESSING

Data processing happens in our daily lives whether or not we are aware of it. Here are some real-life examples of data processing:

- Stock trading software converts millions of stock data points into a simple graph.

- An e-commerce company uses customers' search history to recommend similar products.

- A digital marketing company uses people's demographic data to plan location-specific campaigns.

- A self-driving car uses real-time data from sensors to detect whether there are pedestrians and other cars on the road.

TOOLS FOR DATA PROCESSING

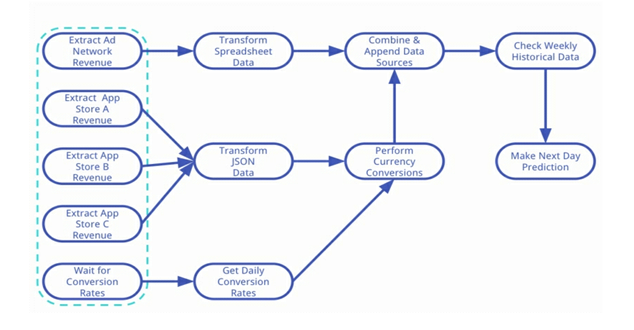

Traditional tools cannot handle big data analytics, so a few of the available tools are discussed below. Big data tools are divided into three main categories:

- Stream Processing: This type of processing needs to handle large amounts of real-time data. Applications such as industrial sensors, online streaming and log file processing require real-time processing of big data, and live processing of big data requires low latency. The MapReduce model handles this poorly: it introduces high latency because the map phase data needs to be saved to disk before the reduce phase begins, which adds delay and makes it unsuitable for real-time data processing.

- Batch Processing: Apache Hadoop is known as the most dominant tool for batch processing of big data. It is widely used across different domains such as data mining and machine learning. It balances the load by distributing it across different machines. It performs extremely well on big data because it is specifically designed for batch processing.

- Interactive Processing: Interactive analysis tools allow users to interact with data and analyze it in their own way. In this type of processing, users can interact with the computer because they are directly connected to it.
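The difference between batch and stream processing can be illustrated with a small Python sketch. It assumes a hypothetical list of sensor readings; the function names are made up for the example. The batch version waits for the whole dataset before computing, while the stream version produces an updated result as each reading arrives.

```python
def batch_average(readings):
    """Batch style: wait for the full dataset, then compute once."""
    return sum(readings) / len(readings)


def stream_average(readings):
    """Stream style: yield an updated average after every reading arrives."""
    count = 0
    total = 0.0
    for value in readings:
        count += 1
        total += value
        yield total / count


# Hypothetical sensor readings, used only for illustration.
sensor_readings = [10.0, 20.0, 30.0, 40.0]

final_result = batch_average(sensor_readings)
running_results = list(stream_average(sensor_readings))

if __name__ == "__main__":
    print("batch:", final_result)
    print("stream:", running_results)
```

Both approaches arrive at the same final value; the stream version simply makes an answer available after every reading, which is why latency matters so much more for streaming tools.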

STREAM PROCESSING TOOLS

- Apache Storm: This is one of the most popular stream processing platforms. It is scalable, open source, fault tolerant and distributed, and it is built for unbounded data streams. It is developed specifically for streaming data, is easy to operate and ensures that all the data is processed. It processes millions of records every second, which makes it an efficient platform for data streaming.

- Splunk: This is another intelligent, real-time platform useful for accessing big data to retrieve information produced by machines. It lets users monitor, access and analyze data through a web interface. The results are presented through reports, alerts and graphs. Splunk's distinctive features, such as indexing of structured and unstructured data, creating dashboards, online searching and real-time reporting, set it apart from other stream processing tools.

BATCH PROCESSING TOOLS

- MapReduce Model: Hadoop is essentially a software platform developed for distributed, data-intensive applications. It uses MapReduce as its computational paradigm. MapReduce, developed at Google and adopted by other web companies, is a programming model useful for analyzing, processing and generating large data sets. It breaks a complex problem into subproblems and continues this process until each subproblem can be handled directly.

- Dryad: It is a programming model that can process applications in both parallel and distributed ways. It can scale from a small cluster to a very large cluster, using the cluster to process and execute work in a distributed manner. With the Dryad framework, programmers can use as many machines as are available, even ones with multiple cores and processors.

- Talend Open Studio: This tool gives users a graphical interface to analyze data visually. It is open source software that works with Apache Hadoop. Unlike with plain Hadoop, users can solve problems without writing Java code. Moreover, users can drag and drop icons according to their defined tasks.
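The MapReduce idea described above can be sketched in plain Python with the classic word-count example. This is a single-process illustration of the map, shuffle and reduce steps, not Hadoop's actual API; all function names here are hypothetical.

```python
from collections import defaultdict


def map_phase(document):
    """Map: emit a (word, 1) pair for every word in one document."""
    for word in document.lower().split():
        yield (word, 1)


def shuffle(pairs):
    """Shuffle: group all emitted values by their key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: combine the values for one key into a single result."""
    return key, sum(values)


def word_count(documents):
    # In a real cluster, each document (or split) would be mapped on a
    # different machine; here everything runs sequentially.
    pairs = [pair for doc in documents for pair in map_phase(doc)]
    grouped = shuffle(pairs)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    print(word_count(["big data", "Big batch data"]))
```

Because each map call only looks at its own document and each reduce call only looks at one key, the work can be spread across many machines, which is what makes the model suitable for batch processing of large data sets.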

INTERACTIVE ANALYSIS TOOLS

- Google's Dremel: It was proposed by Google and supports interactive processing. Dremel's architecture is very different from that of Apache Hadoop, which was developed for batch processing. It can run a collection of queries in seconds over a table that has trillions of rows, with the help of columnar data and multi-level trees. It also supports thousands of processors and can accommodate petabytes of data for thousands of Google's users.

- Apache Drill: Apache Drill is a distributed platform that supports interactive analysis of big data. It is more flexible than Google's Dremel in its support for different query languages, data sources and data types. Drill is designed to scale to hundreds of servers, to process trillions of user records and to process petabytes of data in very little time.
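To give a feel for why columnar data helps interactive queries, here is a minimal Python sketch that converts row records into columns and then answers a query by scanning only the columns it needs. It is a simplified illustration with made-up field names and data; it does not reproduce Dremel's nested storage format or Drill's query engine.

```python
# Hypothetical row-oriented records, used only for illustration.
rows = [
    {"user": "a", "country": "US", "bytes": 120},
    {"user": "b", "country": "IN", "bytes": 300},
    {"user": "c", "country": "US", "bytes": 80},
]


def to_columns(records):
    """Store each field as its own list instead of storing whole rows."""
    columns = {}
    for record in records:
        for name, value in record.items():
            columns.setdefault(name, []).append(value)
    return columns


def sum_where(columns, value_column, filter_column, wanted):
    """Sum one column for rows matching a filter, reading only two columns."""
    return sum(
        value
        for value, key in zip(columns[value_column], columns[filter_column])
        if key == wanted
    )


columns = to_columns(rows)
us_bytes = sum_where(columns, "bytes", "country", "US")

if __name__ == "__main__":
    print(us_bytes)
```

The query never touches the `user` column, which is the basic reason column-oriented layouts can answer aggregate queries over very wide tables quickly.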

FEATURES OF DATA PROCESSING

The main features of the strong-motion data processing procedures used in China are as follows:

- The effect of the record length on the level of digitizing noise background is considered; a special method is suggested for digitizing and connecting the successive sections of long-duration records that require repositioning on the digitizer table.

- The method for instrument correction at both high and low frequency bands is designed for accelerograms recorded by accelerographs with a pendulum-galvanometer system.

- In contrast with the band-pass filter parameters suggested by Trifunac et al., quite different cut-off frequencies are used for the low-pass and high-pass filters, and restrictions are placed on f_LC, the cut-off frequency of the high-pass filter, which take the digitizing noise background into account.

HOW DOES IT WORK?

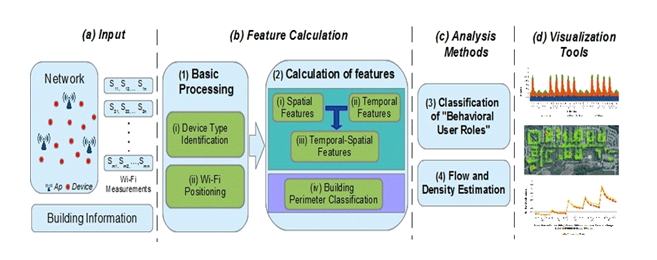

Data processing is the manipulation of data by a computer. It includes the conversion of raw data to machine-readable form, the flow of data through the CPU and memory to output devices, and the formatting or transformation of output. Any use of computers to perform defined operations on data can be included under data processing. In the commercial world, data processing refers to the processing of data required to run organizations and businesses.

WHY DATA PROCESSING?

The importance of data processing includes increased productivity and profits, better decisions, and more accurate and reliable results. Further cost reduction, ease of storage, distribution and report making, followed by better analysis and presentation, are other advantages. The need to process data is now widely recognized and reflected in every field of work. Whether the work is done in a business environment or for academic research, data management systems are used by every organization.

TRENDS IN DATA PROCESSING

- Hybrid And Multi-Cloud Data Strategies: The pandemic was like tossing gasoline on an already burning fire when it comes to enterprise adoption of cloud-based data resources. Suddenly, hundreds of thousands of employees needed to access company data and collaborate remotely, and cloud-based solutions were often the clear winner. Hybrid and multi-cloud approaches, in particular, were key drivers of cloud data management strategies.

- AI And ML: This data management trend is the continuation of a trend that has been emerging for several years, mostly driven by big data concerns. The unprecedented volume of data that organizations have to manage is colliding with an ongoing staffing shortage across the tech industry as a whole, and particularly in data-focused roles. AI and machine learning (ML) bring highly valuable automation to manual processes that have been prone to human error. Foundational data management tasks like data identification and classification can be handled more efficiently and accurately by advanced technologies in the AI/ML space.

- Augmented Data Analytics: According to Gartner, by the end of 2021 augmented data management could reduce manual data management tasks by 45%. Considering the exponential growth of data volumes and a shrinking pool of data science talent, the importance of this development would be hard to overstate. When companies do manage to hire data science experts, they want to get the most from their skills rather than assigning them to manual tasks like data cleaning. Augmented data management solutions ingest, store, organize and maintain data, often through AI and ML. Manually intensive tasks like data preparation and data cleaning can be carried out with augmented data approaches.

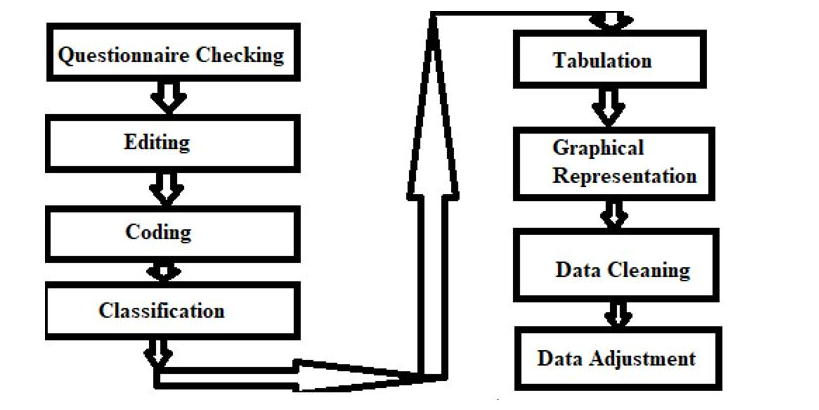

DATA PROCESSING IN RESEARCH AREA

The main steps in this processing are as follows:

- Questionnaire checking: The first step is to check whether any questionnaires are unacceptable. Questionnaires are unacceptable when, for example, they contain incomplete or partial data or show inadequate knowledge.

- Editing: The raw data is examined for errors so that any errors found can be edited and corrected.

- Coding: Coding is the process of assigning symbols so that responses can be placed into their respective groups.

- Classification: Data is classified by class, such as class interval or frequency, or by attributes, such as city or population, for better understanding.

- Tabulation: After classifying, we tabulate the entire result in the relevant columns and rows.

- Graphical representation: The data is then represented in graphical or statistical form, such as a bar chart.

- Data cleaning: After that, we check the entire data set once again from the start; if any data is missing, we add it for consistency.

- Data adjusting: An additional step of data adjusting is carried out as a complement to improve quality.
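The first few research steps above (checking, editing, coding, classification and tabulation) can be sketched in Python as follows. The survey responses, the yes/no coding scheme and all function names are assumptions made for this illustration only.

```python
from collections import Counter

# Hypothetical coding scheme: symbols assigned to survey answers.
ANSWER_CODES = {"yes": 1, "no": 0}

# Hypothetical raw questionnaire responses.
RESPONSES = [
    {"id": 1, "city": "Pune", "answer": "Yes "},
    {"id": 2, "city": "Delhi", "answer": ""},
    {"id": 3, "city": "Pune", "answer": "no"},
    {"id": 4, "city": "Delhi", "answer": "YES"},
    {"id": 5, "city": "Pune", "answer": "yes"},
]


def check_questionnaires(responses):
    """Questionnaire checking: drop responses with no answer."""
    return [r for r in responses if r["answer"].strip()]


def edit_responses(responses):
    """Editing: correct inconsistent spacing and capitalization."""
    return [{**r, "answer": r["answer"].strip().lower()} for r in responses]


def code_responses(responses):
    """Coding: attach a numeric symbol to each answer."""
    return [{**r, "code": ANSWER_CODES[r["answer"]]} for r in responses]


def tabulate(responses):
    """Classification and tabulation: count codes per city."""
    table = {}
    for r in responses:
        table.setdefault(r["city"], Counter())[r["code"]] += 1
    return table


def process_survey(responses):
    accepted = check_questionnaires(responses)
    edited = edit_responses(accepted)
    coded = code_responses(edited)
    return tabulate(coded)


if __name__ == "__main__":
    for city, counts in sorted(process_survey(RESPONSES).items()):
        print(city, "yes:", counts[1], "no:", counts[0])
```

The resulting table has one row per city and one column per code, which is the form that a bar chart or further statistical analysis would start from.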

ADVANTAGES OF DATA PROCESSING

The advantages of data processing are:

- Highly efficient

- Time-saving

- High speed

- Reduces errors

DISADVANTAGES OF DATA PROCESSING

The disadvantages of data processing are:

- High power consumption.

- Occupies a large amount of memory.

- The cost of installation is high.

- Wastage of memory.

APPLICATION OF DATA PROCESSING

The applications of data processing are:

- In the banking sector, this processing is used by bank customers to verify their bank details, transactions and other details.

- In educational institutions such as schools and colleges, this processing is used to find student details like biodata, class, roll number and marks obtained.

- In the transaction process, the application updates the information when users request their details.

- In logistics tracking, this processing helps in retrieving the required customer data online.

- In hospitals, patients' details can be searched easily.

CONCLUSION OF DATA PROCESSING

Data processing is the conversion of data into useful information. It is broadly divided into 6 basic steps: data collection, storage of data, sorting of data, processing of data, data analysis, and data presentation and conclusions.