Data Science

- Data science is all about data, and I’m pretty sure you already knew that. But did you know that we use data science to make business decisions?

- I’m pretty sure you knew that as well. So what else is new here?

- Well, do you know how data science is used to make business decisions?

- No? Let’s look at that then.

- We all know that every single tech company out there is collecting huge amounts of data. And data is revenue. Why is that?

- That’s because of data science. The more data you have, the more business insights you can generate. Using data science, you can uncover patterns in data that you didn’t even know existed.

- For example, you can discover that some guy who went to New York City for a vacation is most likely to splurge on a luxury trip to Venice in the next three weeks.

- That’s an example that I just made up, might not be true in the real world. But if you’re a company offering luxury tours to exotic destinations, you might be interested in getting this guy’s contact number.

- Data science is being used extensively in such scenarios. Companies are using data science to build recommendation engines, and predicting user behaviour, and much more.

- All of this is only possible when you have enough amount of data so that various algorithms could be applied on that data to give you more accurate results.

- There is also something called as prescriptive analytics in data science, which does pretty much the same predictions that we talked about in the rich tourist example above.

- But as an added benefit, prescriptive analytics will also tell you what kind of luxury tours to Venice a person might be interested in.

- For example, one person might want to fly first class but would be fine with a three star accommodation, whereas another person could be ready to fly economy but definitely needs the most luxurious stay and cultural experience.

- So even though both these people will be your rich clients, both of them have different requirements. So you can use prescriptive analytics for this.

- You might be wondering, hey, that sounds a lot like artificial intelligence. And you’re not entirely wrong, actually. Because running these machine learning algorithms on huge datasets is again a part of data science.

- Machine learning is used in data science to make predictions and also to discover patterns in the data. This again sounds like we’re adding intelligence to our system. That must be artificial intelligence. Right? Let’s see.

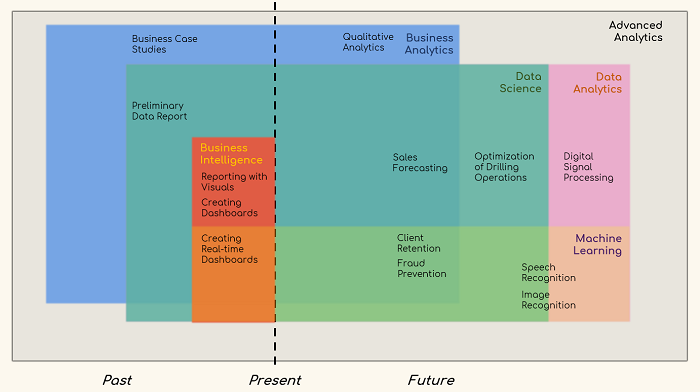

Data Analyst

- A data analyst is usually the person who can do basic descriptive statistics, visualize data, and communicate data points for conclusions.

- They must have a basic understanding of statistics, a perfect sense of databases, the ability to create new views, and the perception to visualize the data. Data analytics can be referred to as the necessary level of data science.

Machine Learning

- Let’s talk about machine learning now. Machine Learning (ML) is considered a sub-set of AI. You can even say that ML is an implementation of AI.

- So whenever you think AI, you can think of applying ML there. As the name makes it pretty clear, ML is used in situations where we want the machine to learn from the huge amounts of data we give it, and then apply that knowledge on new pieces of data that streams into the system.

- But how does a machine learn, you might ask.

- There are different ways of making a machine learn. Different methods of machine learning are supervised learning, non-supervised learning, semi-supervised learning, and reinforced machine learning.

- In some of these methods, a user tells the machine what are the features or independent variables (input) and which is the dependent variable (output). So the machine learns the relationship between the independent and dependent variables present in the data that is provided to the machine. This data which is provided is called the training set.

- And once the learning phase or the training is complete, the machine, or the ML model, is tested on a piece of data which the model has not encountered before.

- This new dataset is called the test dataset. There are different ways in which you can split your existing dataset between the training and the test dataset.

- Once the model is mature enough to give reliable and high accuracy results, the model will be deployed to a production setup where it will be used against absolutely new datasets for problems such as predictions or classification.

- There are various algorithms in ML which could be used for prediction problems, classification problems, regression problems, and more.

- You might have heard of algorithms such as simple linear regression, polynomial regression, support vector regression, decision tree regression, random forest regression, K-nearest neighbours, and the like. These are some of the common regression and clustering algorithms used in ML.

- There are many more as well. And there are a lot of data preparation or pre-processing steps you need to take care of even before training your model.

- But ML libraries such as SciKit Learn have evolved so much that even an app developer without any background in mathematics or statistics, or even a formal AI education, can start using these libraries to build, train, test, deploy, and use ML models in the real world.

- But it always helps to know how these algorithms work, so that you can make informed decisions when you are to select an algorithm for your problem statement. With this knowledge of ML, let’s talk a bit about deep learning now.

Machine Learning modeling starts with the data exist and typical components are as follows :

- Understand problem – Make sure an efficient way to solve the problem is ML. Note that not all problems solvable using ML.

- Explore Data – To get an intuition of features to be used in ML model. This might need more than one iteration. Data visualization plays a critical role here.

- Prepare data – This is an important stage with a high impact on the accuracy of ML model. It deals data issue like what to do with missing data for a feature? Replace with dummy value like zero, or mean of other values or drop the feature from model?. Scaling features, which make sure values of all features are in same range, is critical for many ML models. A lot of other techniques like polynomial feature generation is also used here to derive new features.

- Select a model and train – Model is selected based on a type of problem ( Prediction or classification etc. ) and type of feature set ( some algorithms works with a small number of instances with a large number of features and some others in other cases).

- Performance Measure – In Data Science, performance measures are not standardized, it will change case by case. Typically it will be an indication of Data Timeliness, Data Quality, Querying Capability, Concurrency limits in data access, Interactive visualization capability etc

In ML models, performance measures are crystal clear. Each algorithm will have a measure to indicate how well or bad the model describe the training data given. For example, RME(Root Mean Square Error) is used in Linear Regression as an indication of an error in model.

- Development methodology – Data Science projects are aligned more like an engineering project with clearly defined milestones.

- But ML projects are more of research like, which start with a hypothesis and trying to get it proved with data available.

- Visualization – Visualisation in general Data Science represents data directly using any popular graphs like bar, pie etc. But in ML, visualizations also used represents a mathematical model of training data.

- For example, visualizing confusion matrix of a multiclass classification helps to quickly identify false positives and negatives.

- Languages – SQL and SQL like syntax languages (HiveQL, Spark SQL etc) are the most used language in Data Science world. Popular data processing scripting languages like Perl, awk, sed are also in use.

- Framework-specific well-supported languages are another widely (Java for Hadoop, Scala for Spark etc) used category.